import pandas as pd

import matplotlib.pyplot as plt

import seaborn as sns

from sklearn.model_selection import train_test_split, GridSearchCV

from sklearn.tree import DecisionTreeClassifier, plot_tree

from sklearn.preprocessing import StandardScaler

from sklearn.metrics import confusion_matrix, ConfusionMatrixDisplay

from sklearn.metrics import accuracy_score, recall_score, precision_scoreClassification Trees

IN5148: Statistics and Data Science with Applications in Engineering

Department of Industrial Engineering

Agenda

- Introduction

- Classification Trees

- Class-Specific Metrics

Introduction

Load the libraries

Before we start, let’s import the data science libraries into Python.

Here, we use specific functions from the pandas, matplotlib, seaborn and sklearn libraries in Python.

Bayes classifier

It turns out that, if we know the true structure of \(P(Y = 1 | \boldsymbol{X} = \boldsymbol{x})\), we can build a good classification function called the Bayes classifier:

\[C(\boldsymbol{X}) = \begin{cases} 1, & \text{if}\ P(Y = 1 | \boldsymbol{X} = \boldsymbol{x}) > 0.5 \\ 0, & \text{if}\ P(Y = 1 | \boldsymbol{X} = \boldsymbol{x}) \leq 0.5 \end{cases}.\]

This function classifies to the most probable class using the conditional distribution \(P(Y | \boldsymbol{X} = \boldsymbol{x})\).

HOWEVER, we don’t (and will never) know the true form of \(P(Y = 1 | \boldsymbol{X} = \boldsymbol{x})\)!

To overcome this issue, we several methods:

- K-Nearest Neighbours: Estimate \(P(Y = 1 | \boldsymbol{X} = \boldsymbol{x})\) directly. Already covered.

- Classification Trees: Estimate \(P(Y = 1 | \boldsymbol{X} = \boldsymbol{x})\) directly. What we will cover today.

- Ensemble methods: Estimate \(P(Y = 1 | \boldsymbol{X} = \boldsymbol{x})\) directly. Later.

Once we estimate \(P(Y = 1 | \boldsymbol{X} = \boldsymbol{x})\) using one of these methods, we plug it into the Bayes classifier:

\[\hat{C}(\boldsymbol{X}) = \begin{cases} 1, & \text{if}\ \hat{P}(Y = 1 | \boldsymbol{X} = \boldsymbol{x}) > 0.5 \\ 0, & \text{if}\ \hat{P}(Y = 1 | \boldsymbol{X} = \boldsymbol{x}) \leq 0.5 \end{cases}.\]

where

- \(\hat{P}(Y = 1 | \boldsymbol{X} = \boldsymbol{x})\) is an estimate of \(P(Y = 1 | \boldsymbol{X} = \boldsymbol{x})\).

- \(\hat{C}(\boldsymbol{X})\) is an estimate of the true Bayes Classifier \(C(\boldsymbol{X})\)

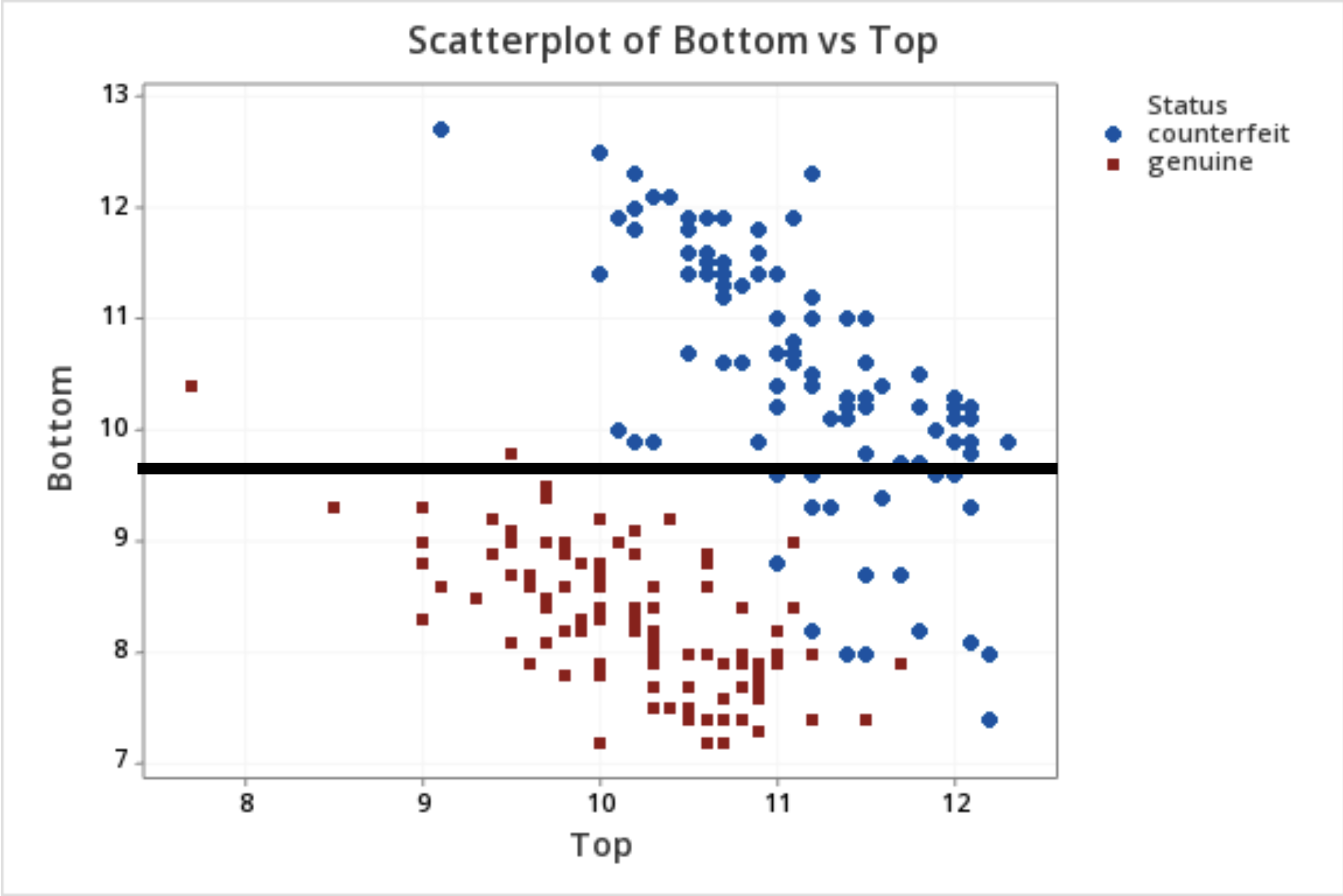

Example 1: Identifying Counterfeit Banknotes

Dataset

The data is located in the file “banknotes.xlsx”.

| Status | Left | Right | Bottom | Top | |

|---|---|---|---|---|---|

| 0 | genuine | 131.0 | 131.1 | 9.0 | 9.7 |

| 1 | genuine | 129.7 | 129.7 | 8.1 | 9.5 |

| 2 | genuine | 129.7 | 129.7 | 8.7 | 9.6 |

| 3 | genuine | 129.7 | 129.6 | 7.5 | 10.4 |

| 4 | genuine | 129.6 | 129.7 | 10.4 | 7.7 |

Create the objects

We use the function .filter() from pandas to select two predictors: Top and Bottom.

We also set the target category.

Let’s partition the dataset

Thanks to the

stratifyparameter, the function makes a clever partition of the data using the empirical distribution of the response.Usually, the proportion of the dataset that goes to the validation set is 20% or 30%.

Classification Trees

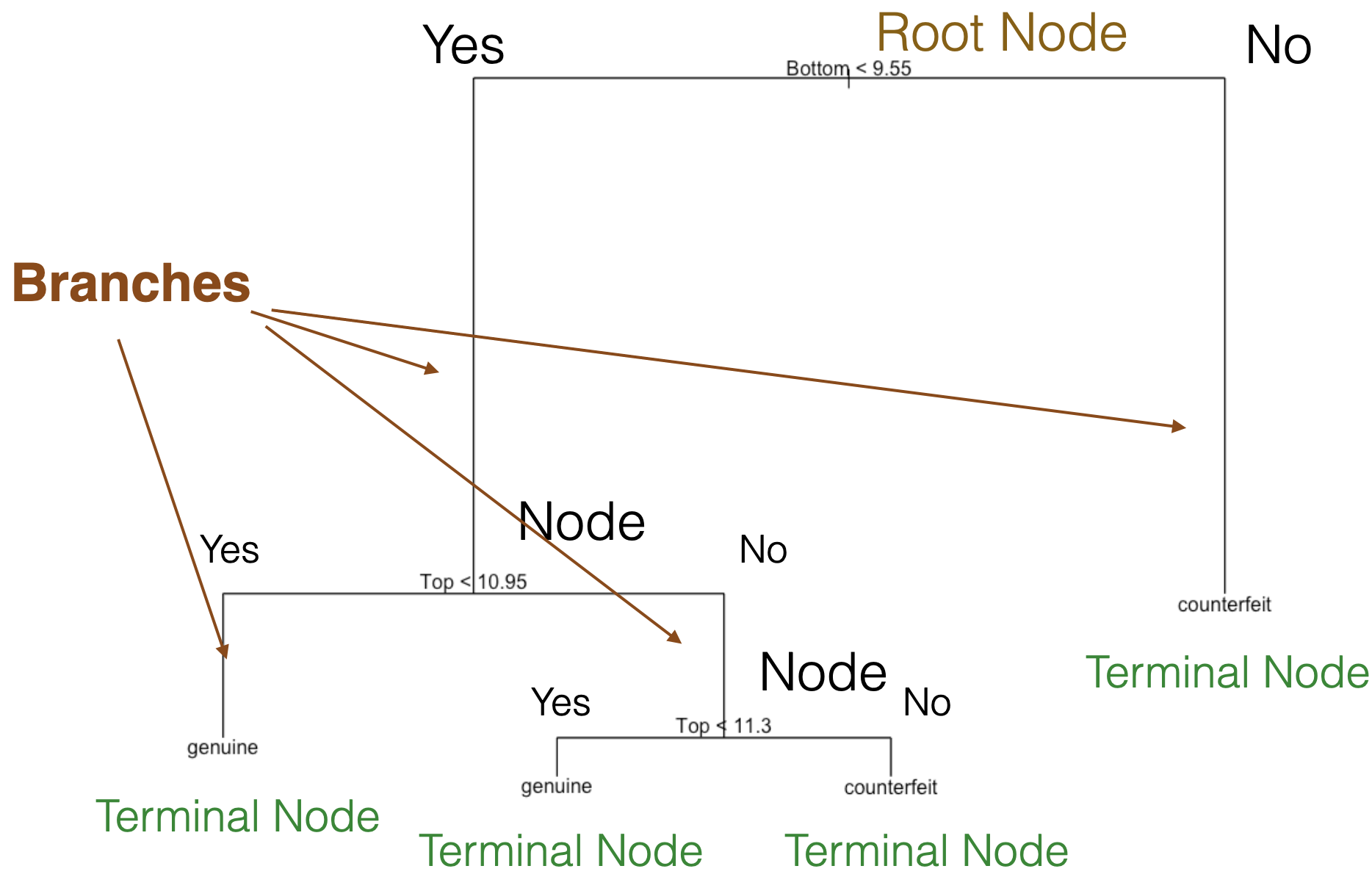

Decision tree

It is a supervised learning algorithm that predicts or classifies observations using a hierarchical tree structure.

Main characteristics:

Simple and useful for interpretation.

Can handle numerical and categorical predictors and responses.

Computationally efficient.

Nonparametric technique.

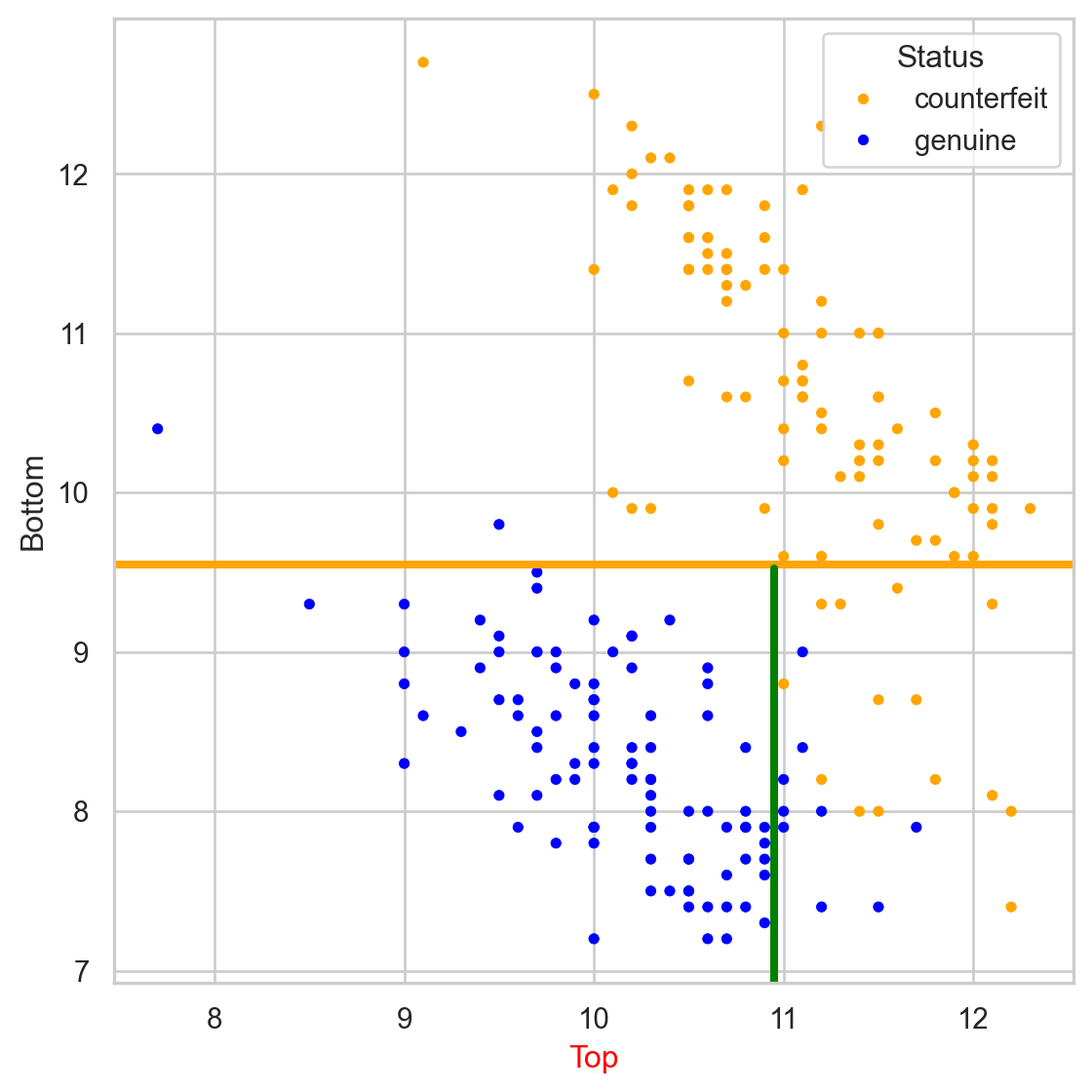

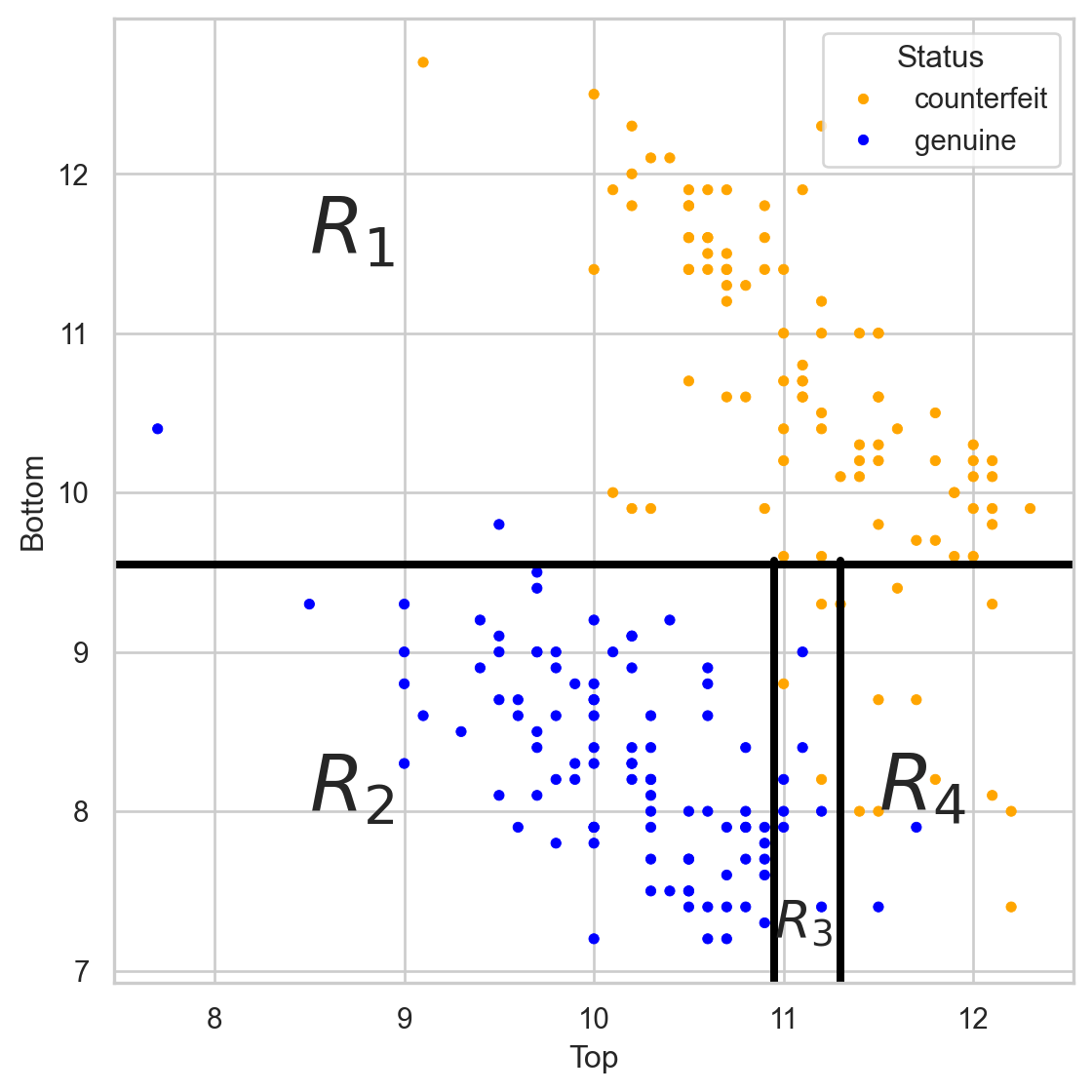

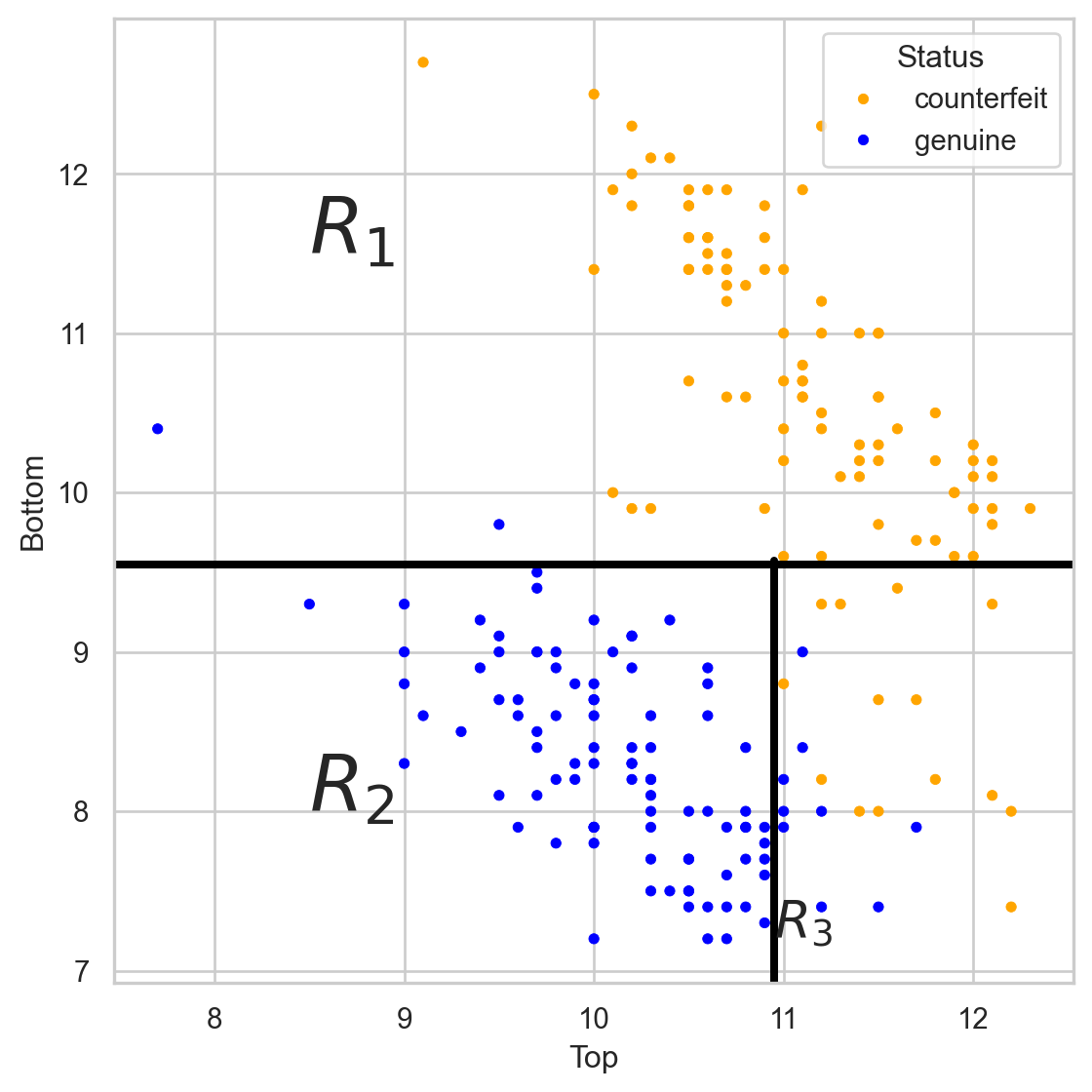

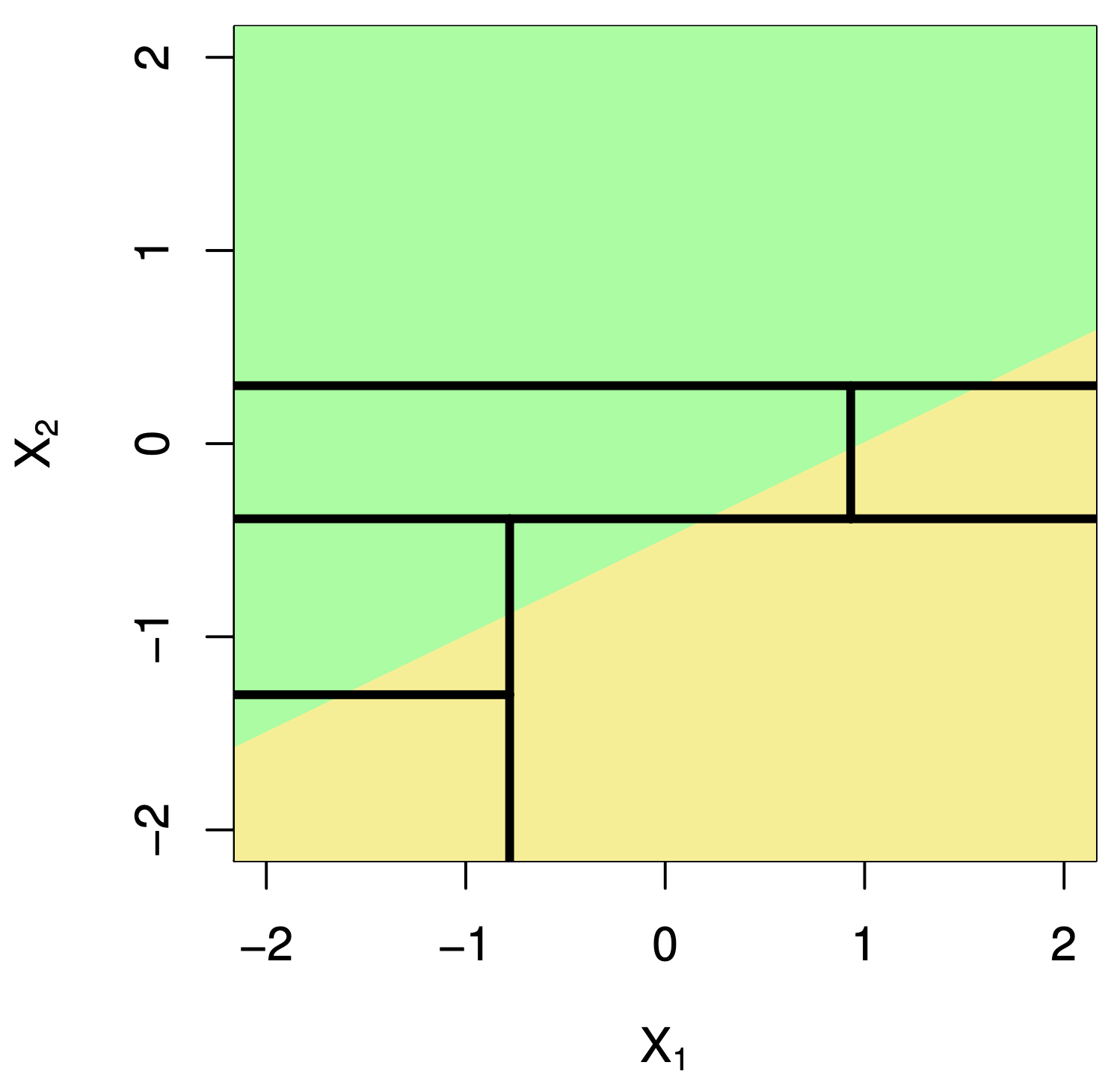

Basic idea of a decision tree

Stratify or segment the predictor space into several simpler regions.

How do you build a decision tree?

Building decision trees involves two main procedures:

Grow a large tree.

Prune the tree to prevent overfitting.

After building a “good” tree, we can predict new observations that are not in the data set we used to build it.

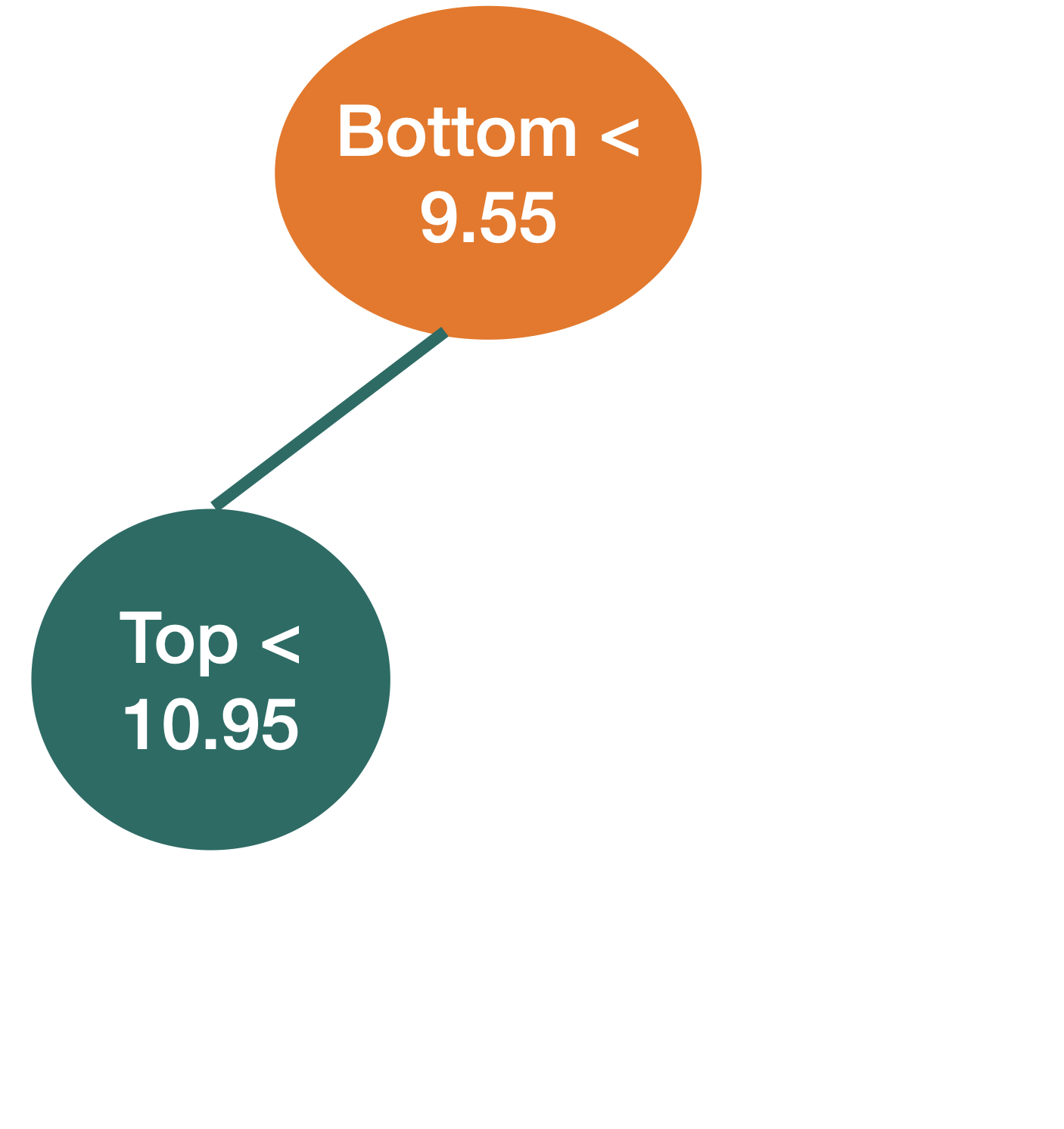

How do we grow a tree?

Using the CART algorithm!

The algorithm uses a recursive binary splitting strategy that builds the tree using a greedy top-down approach.

Basically, at a given node, it considers all variables and all possible splits of that variable. Then, for classification, it chooses the best variable and splits it that minimize the so-called impurity.

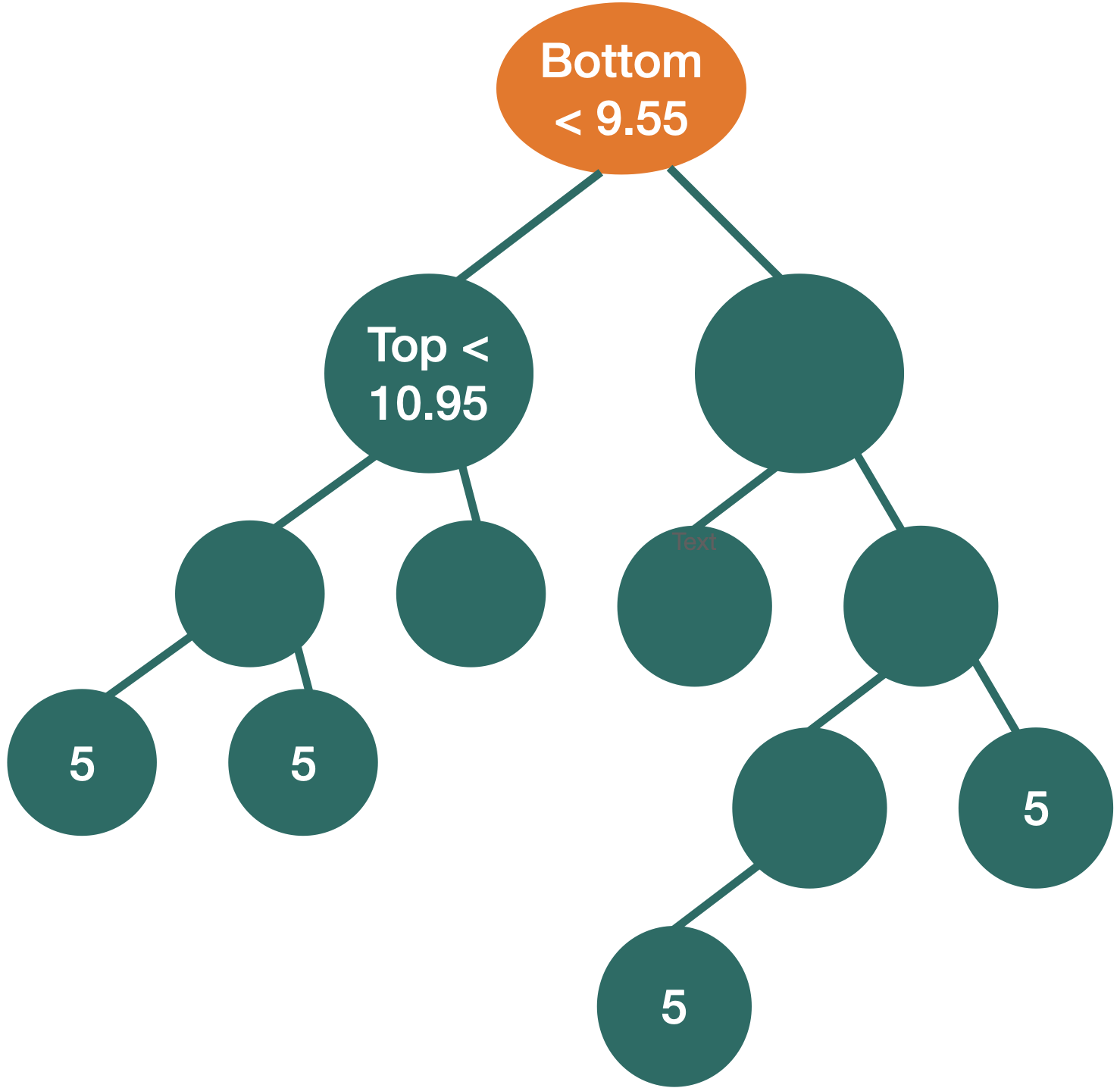

We repeat the partitioning process until:

- impurity does not improve in any of the terminal nodes, or

- each terminal node has no less than, say, 5 observations.

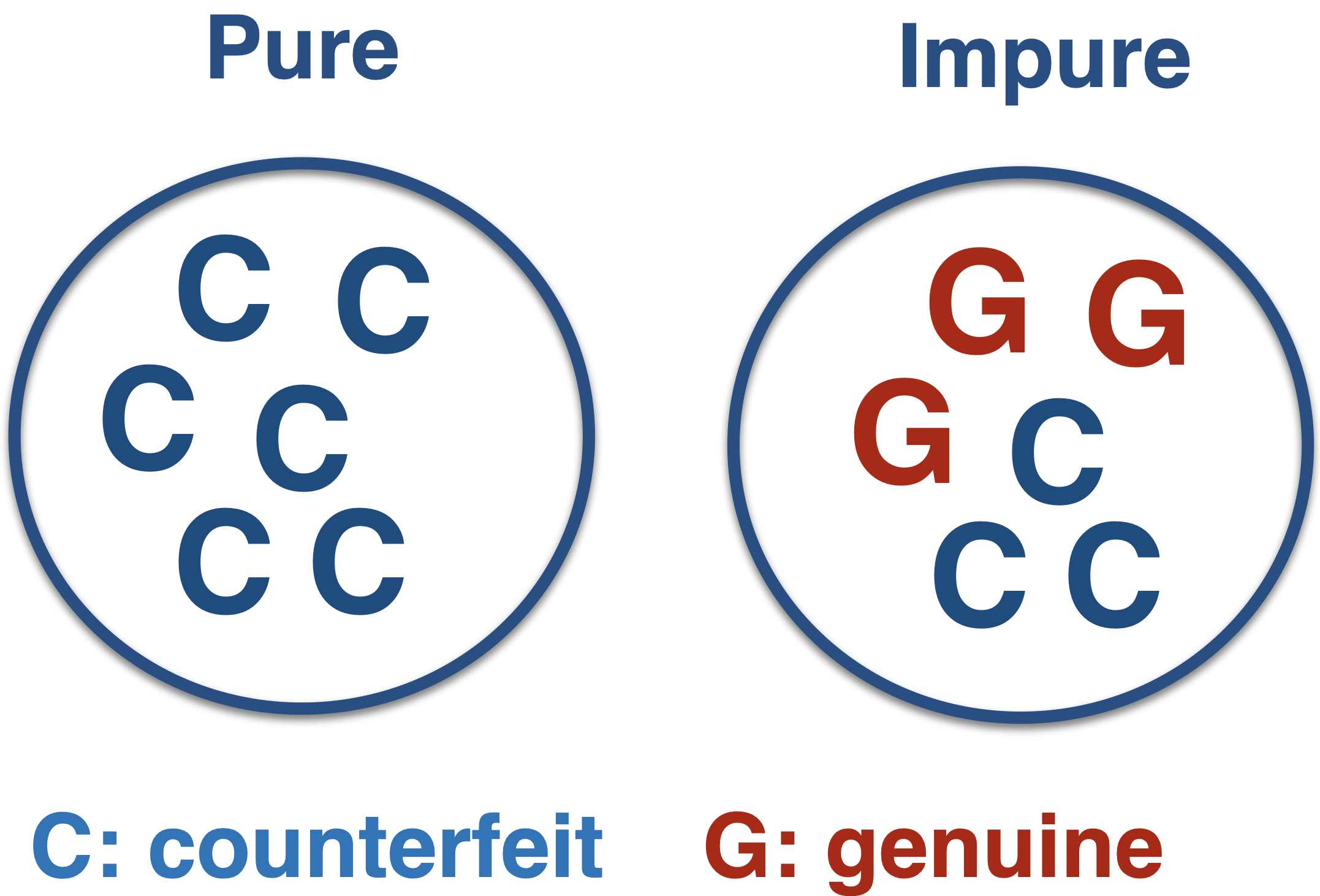

What is impurity?

Node impurity refers to the homogeneity of the response classes at that node.

The CART algorithm minimizes impurity between tree nodes.

How do we measure impurity?

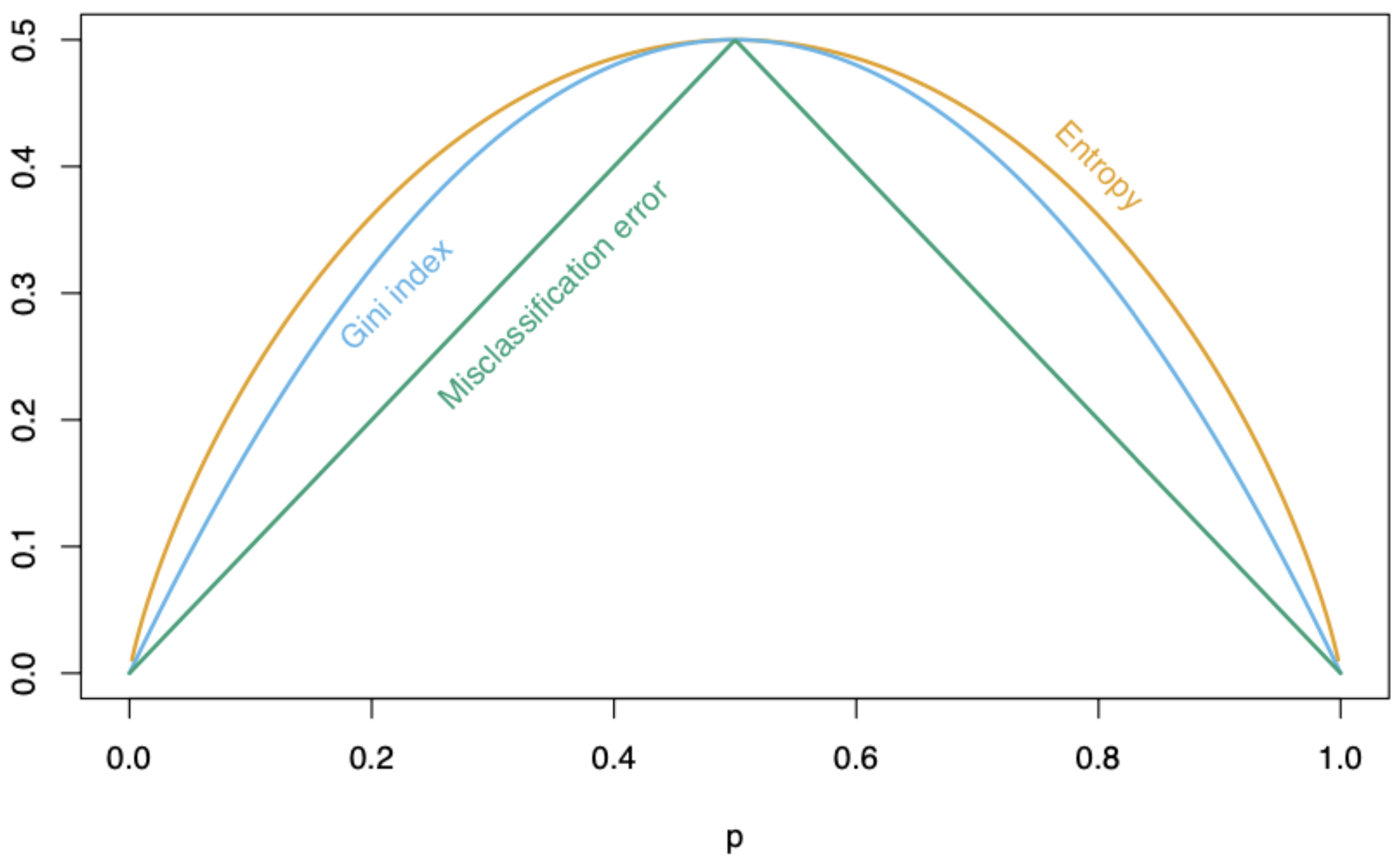

There are three different metrics for impurity:

Misclassification risk.

Cross entropy.

Gini impurity index.

p: Proportion of elements in the target class

Mathematically

Let \(p_1\) and \(p_2\) be the proportion of observations in the target and reference class, respectively, in a node.

Misclassification risk: \(1 - \max\{p_1, p_2\}\)

Cross entropy: \(- (p_1 \log(p_1) + p_2 \log(p_2))\)

Gini impurity index: \(p_1(1 - p_1) + p_2(1 - p_2)\)

In Python

In Python, we use the DecisionTreeClassifier() and fit() functions from scikit-learn to train a classification tree.

The parameter min_samples_leaf controls the minimum number of observations in a terminal node, and the cc_alpha controls the tree complexity (to be described later). The parameter random_state allows you to reproduce the same tree in different runs of the Python code.

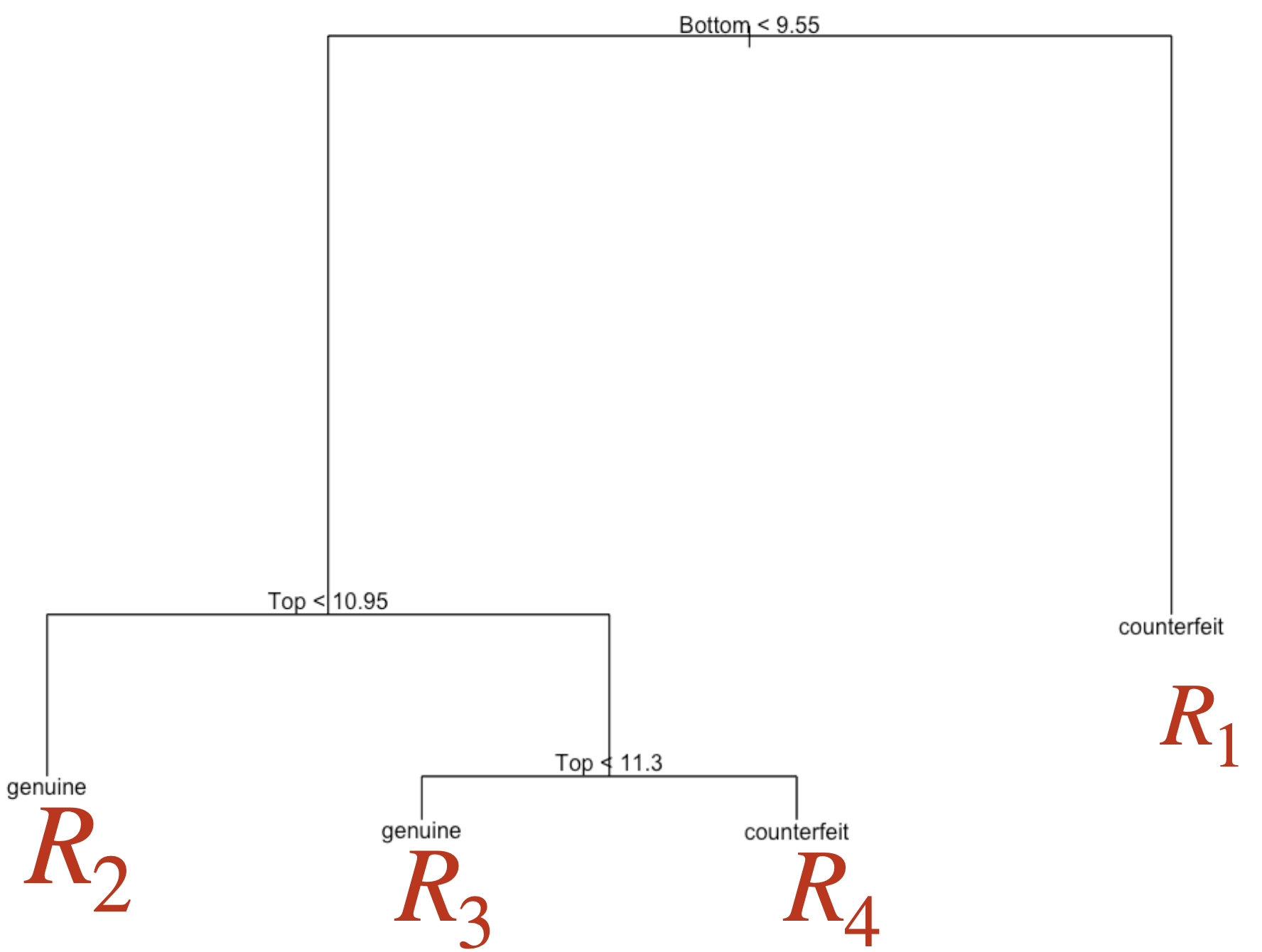

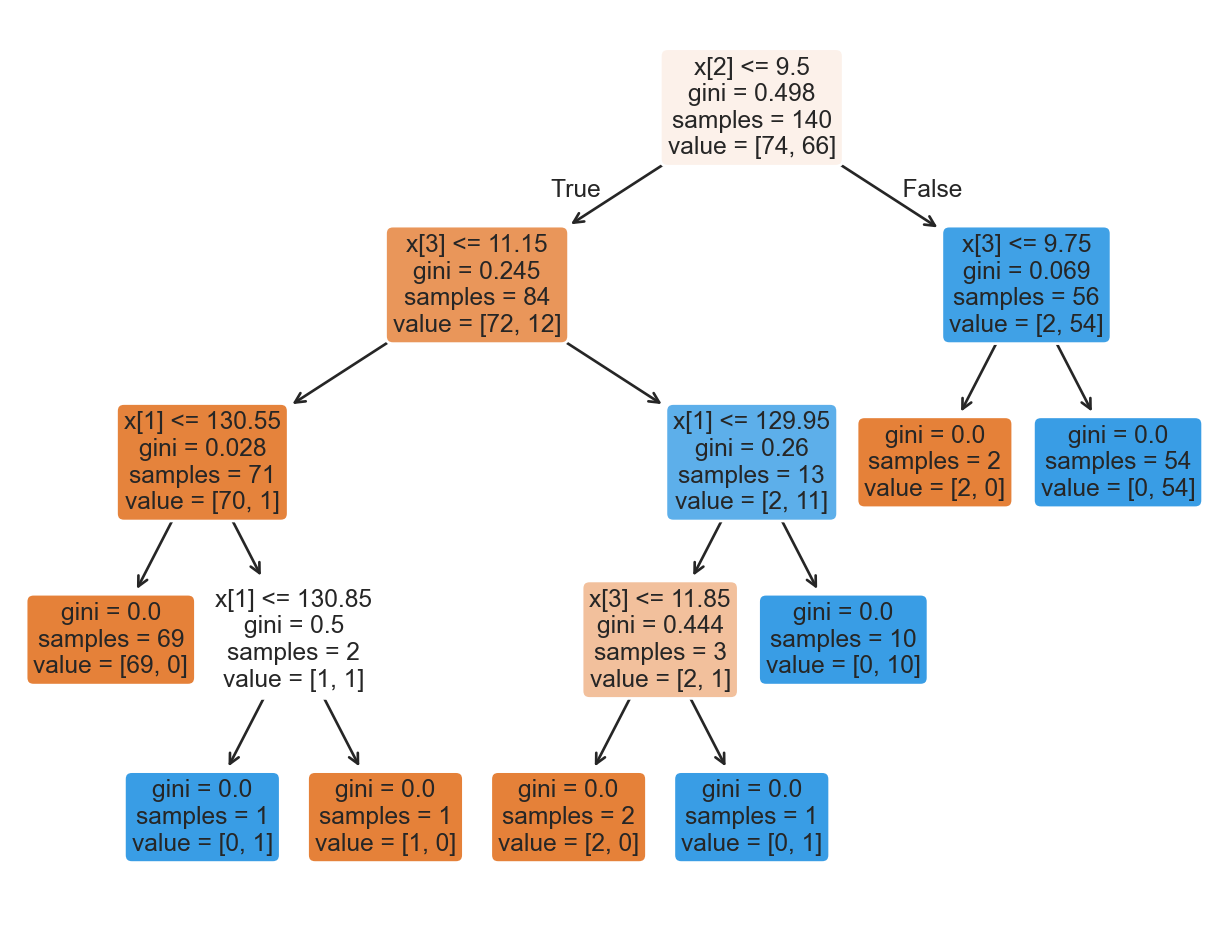

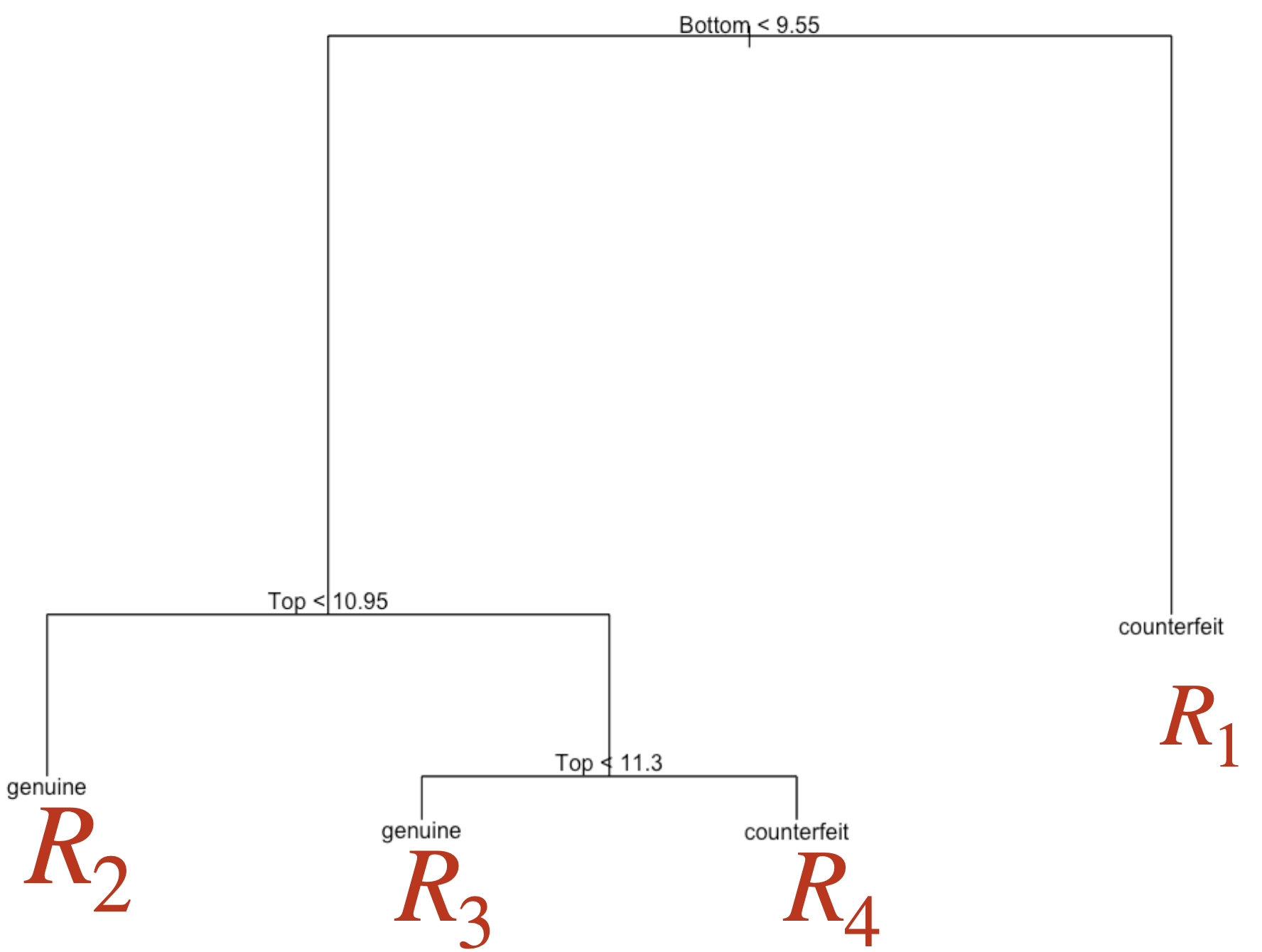

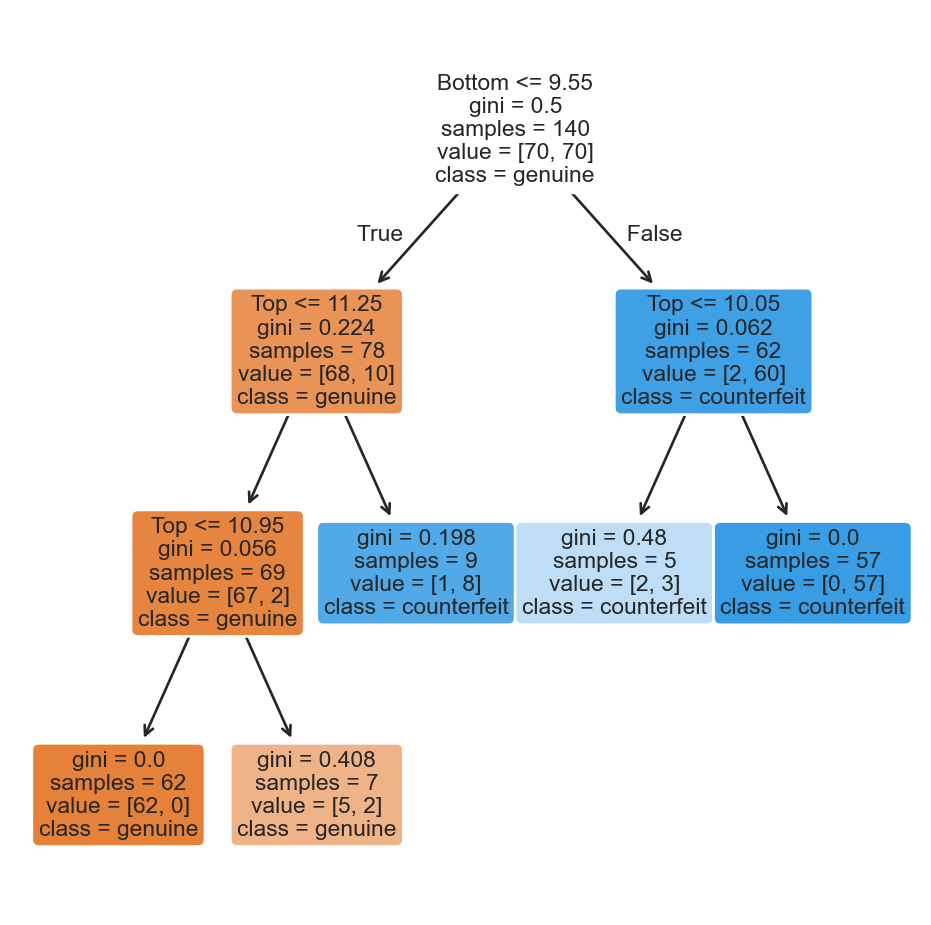

Plotting the tree

To see the decision tree, we use the plot_tree function from scikit-learn and some commands from matplotlib. Specifically, we use the plt.figure() functions to define the size of the figure and plt.show() to display the figure.

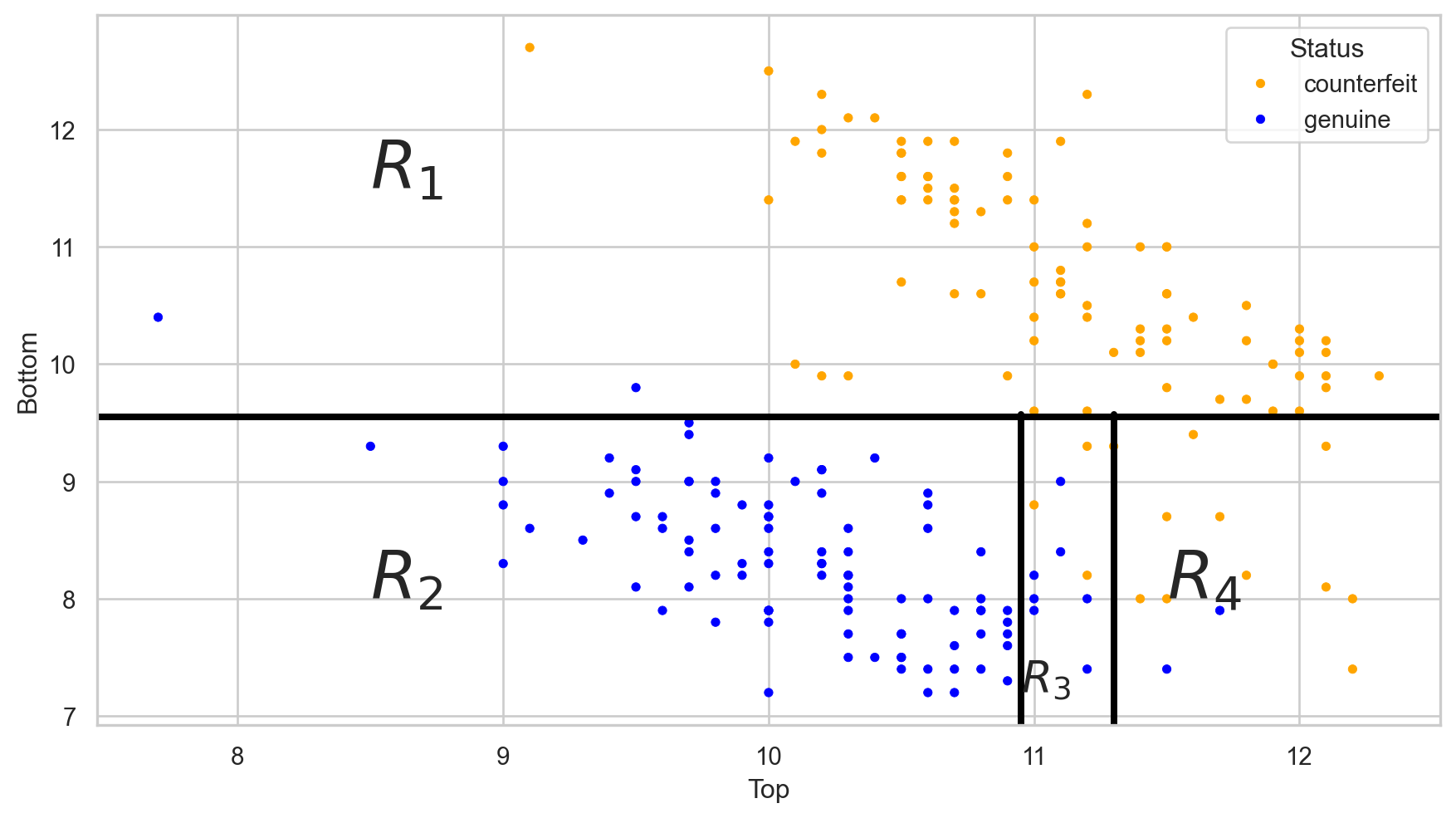

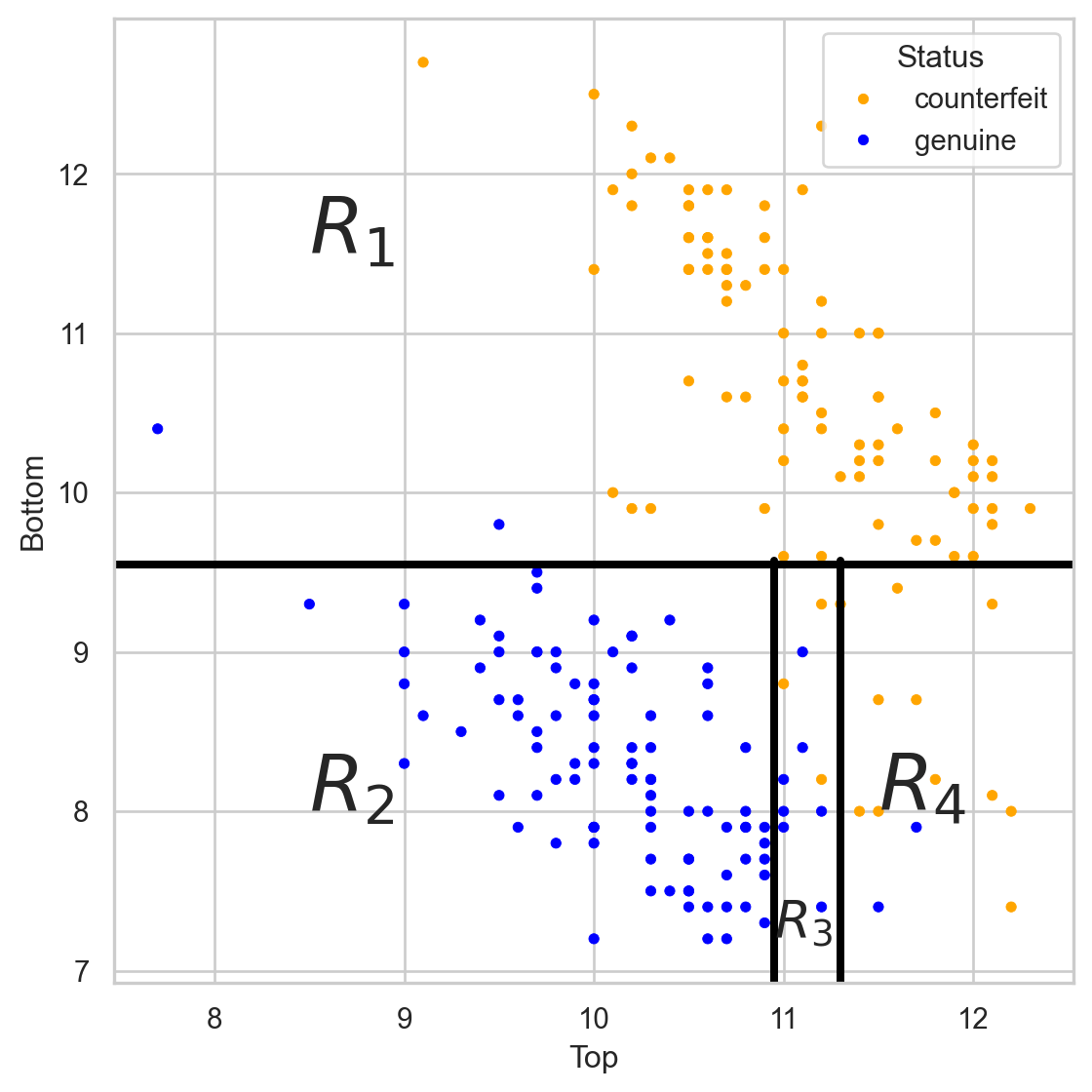

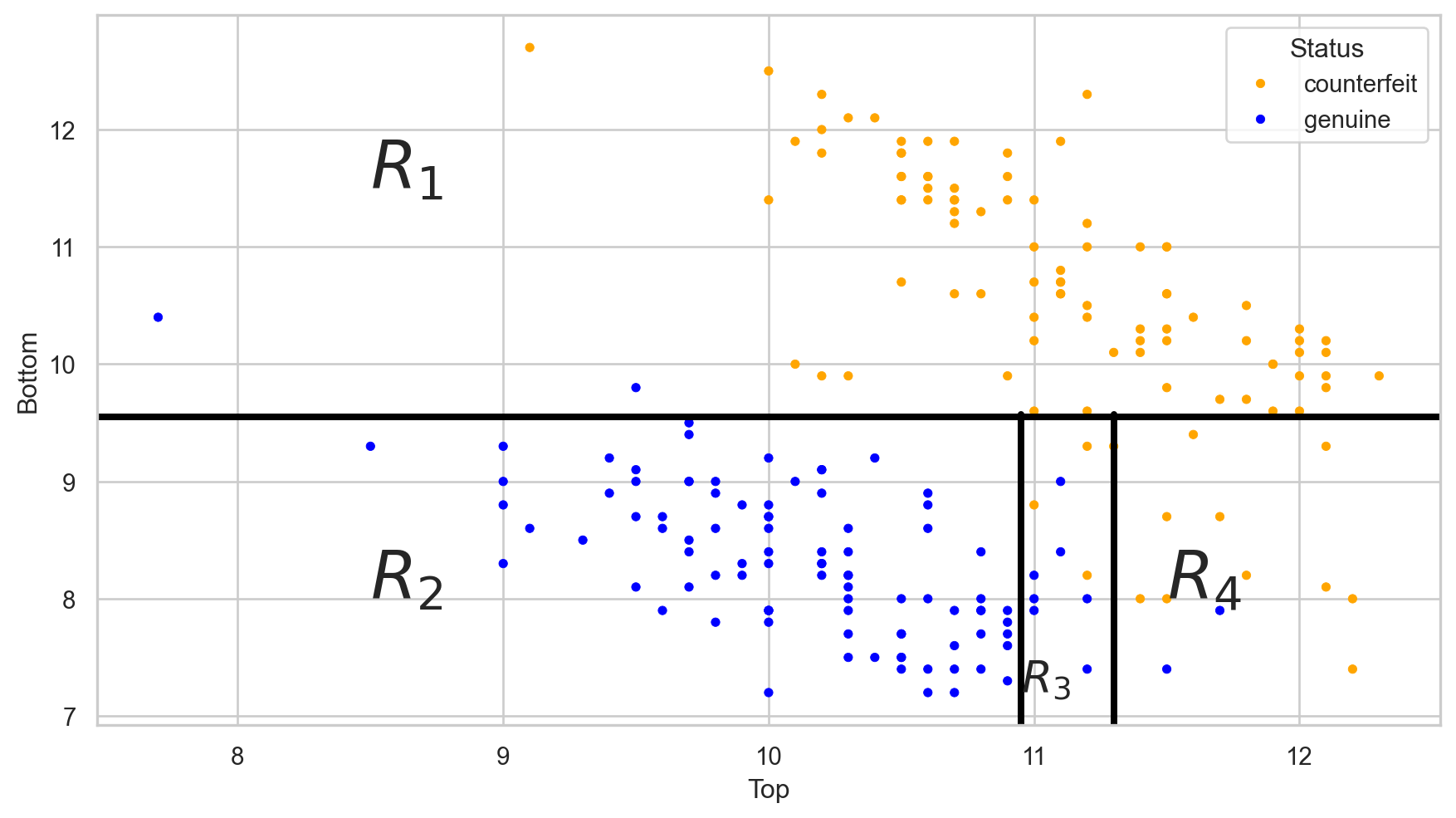

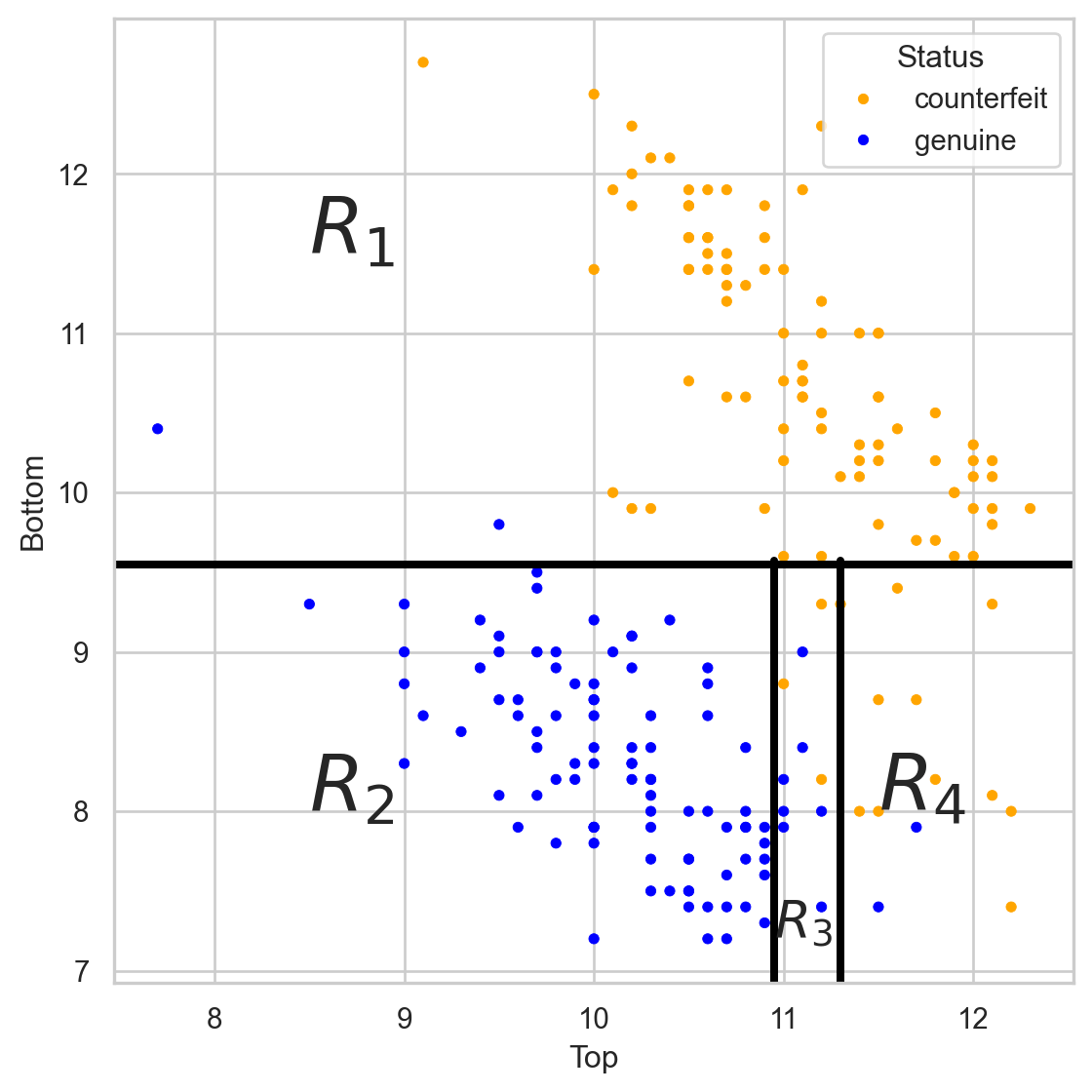

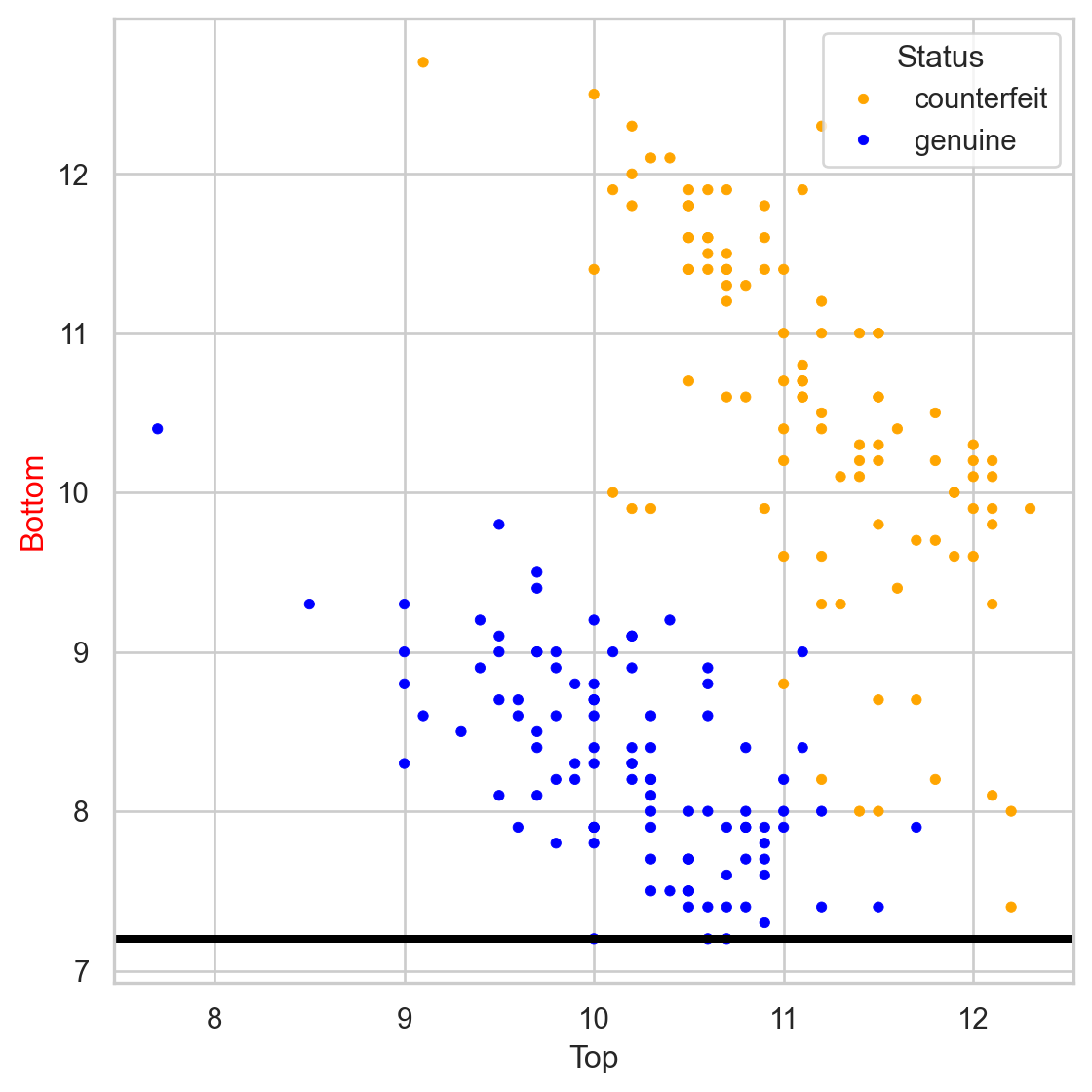

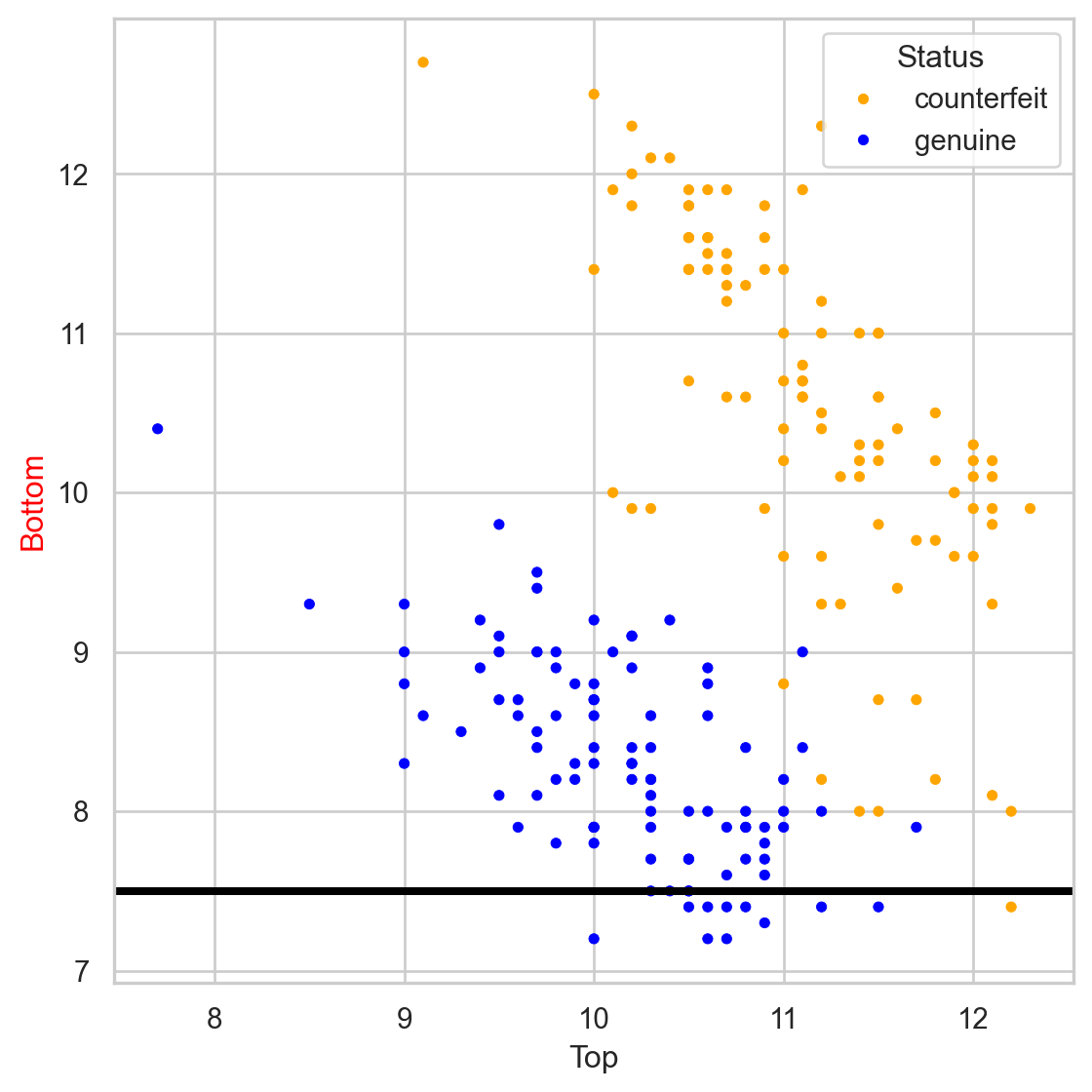

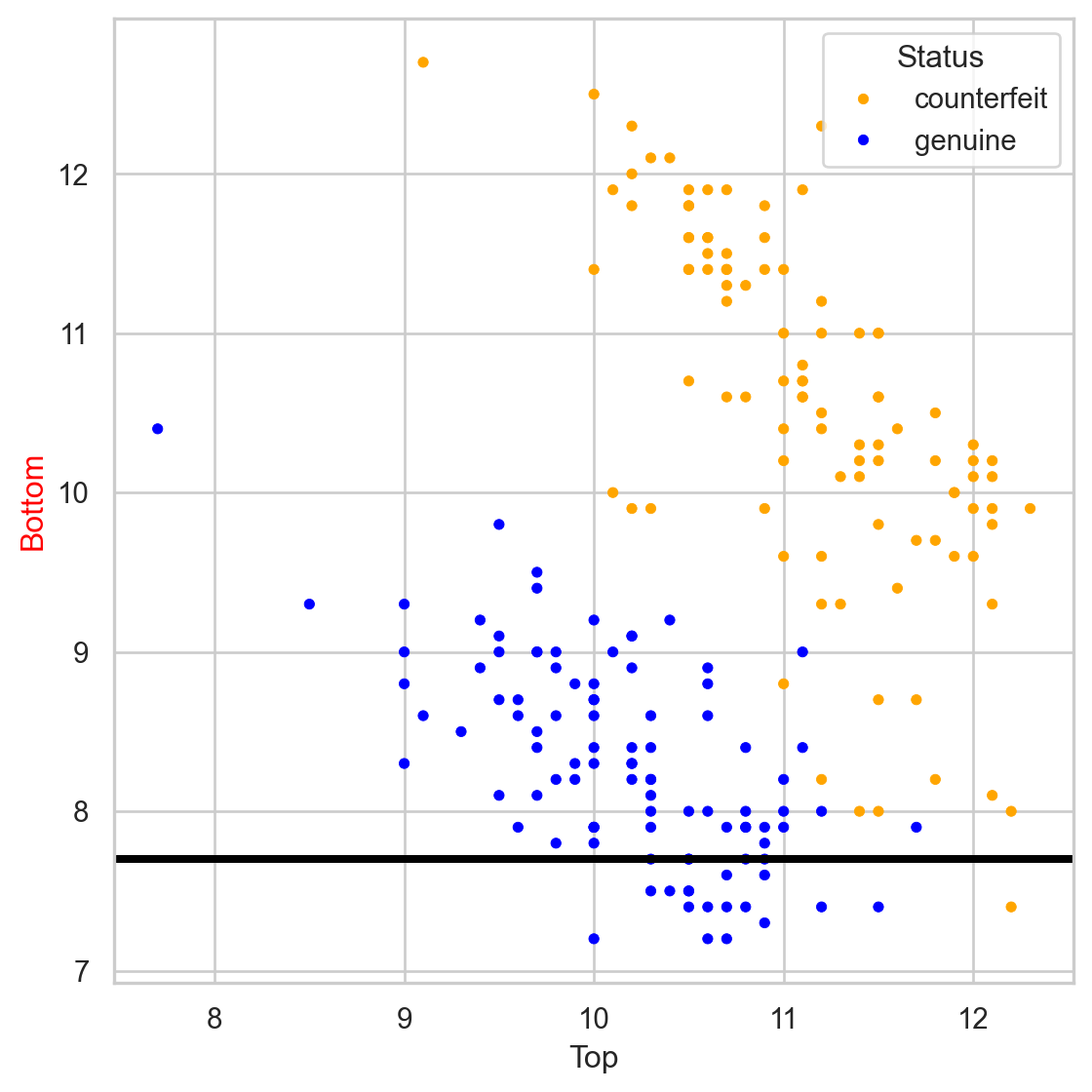

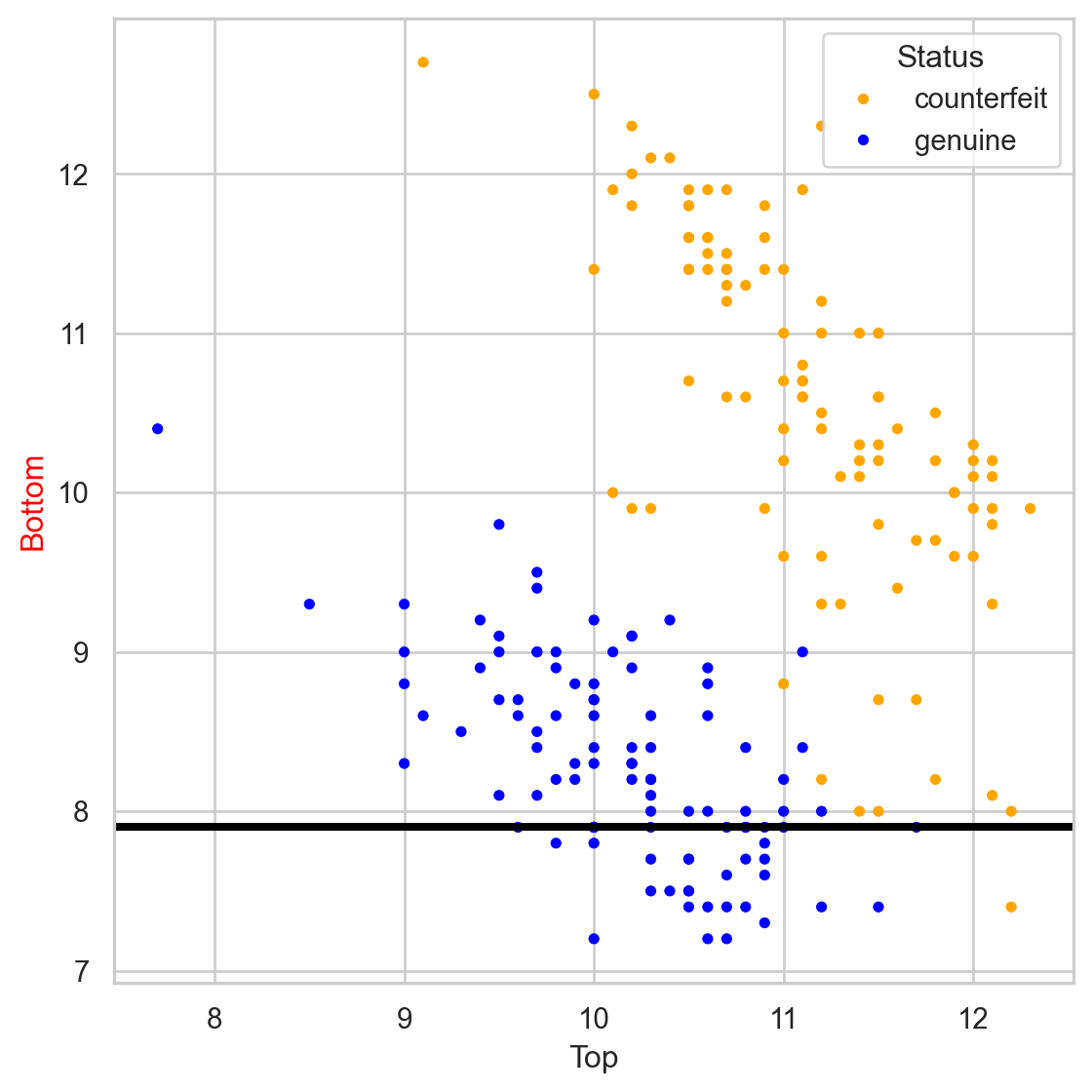

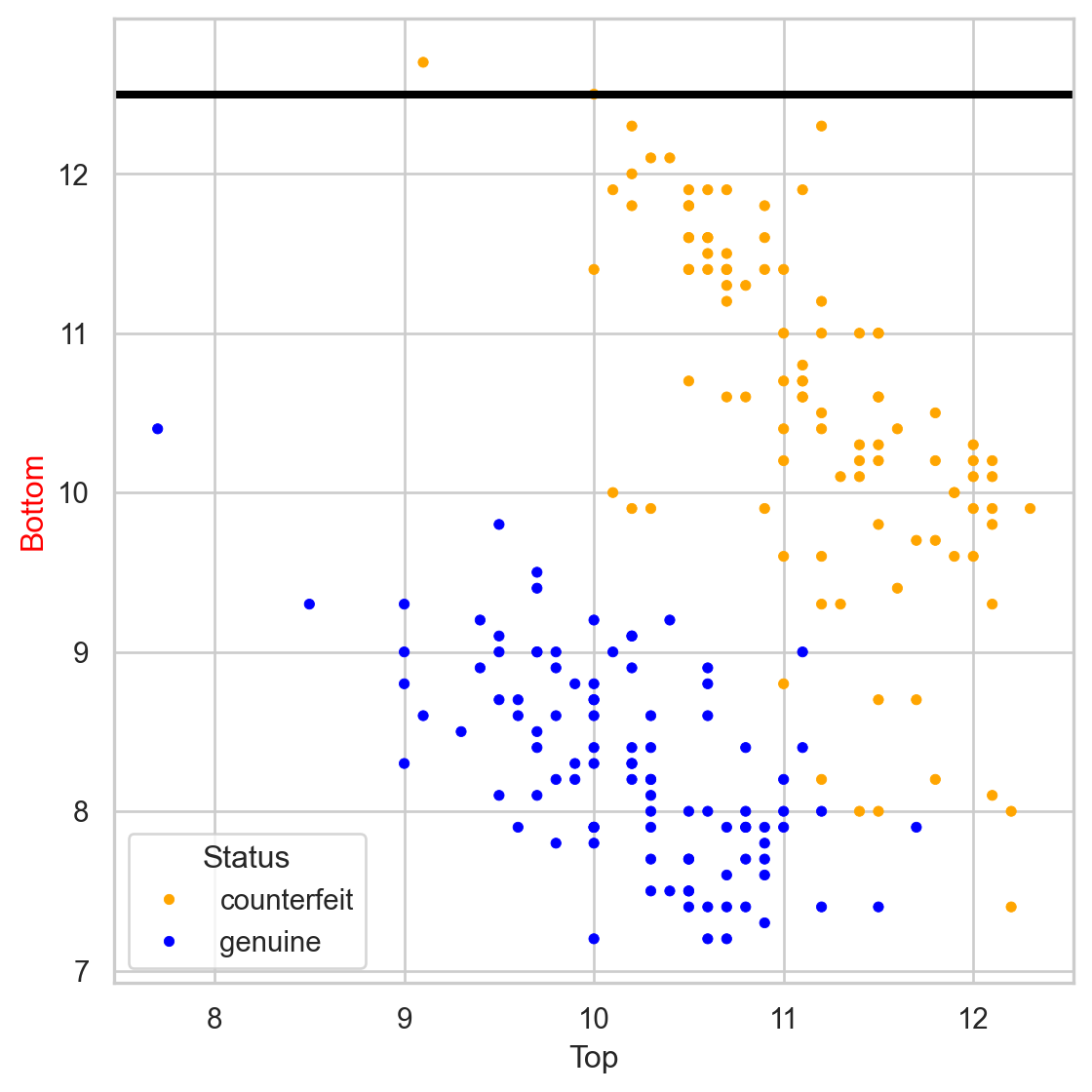

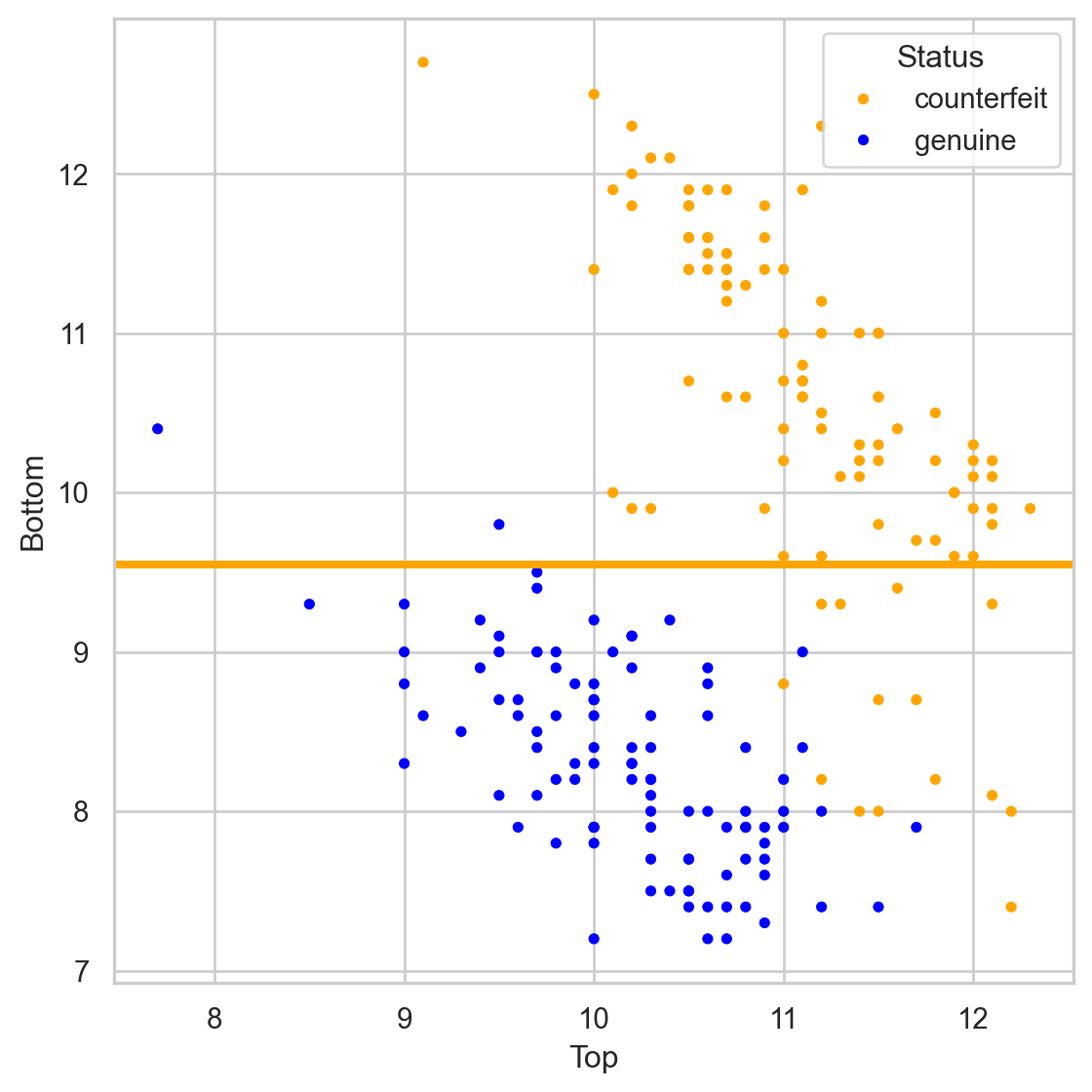

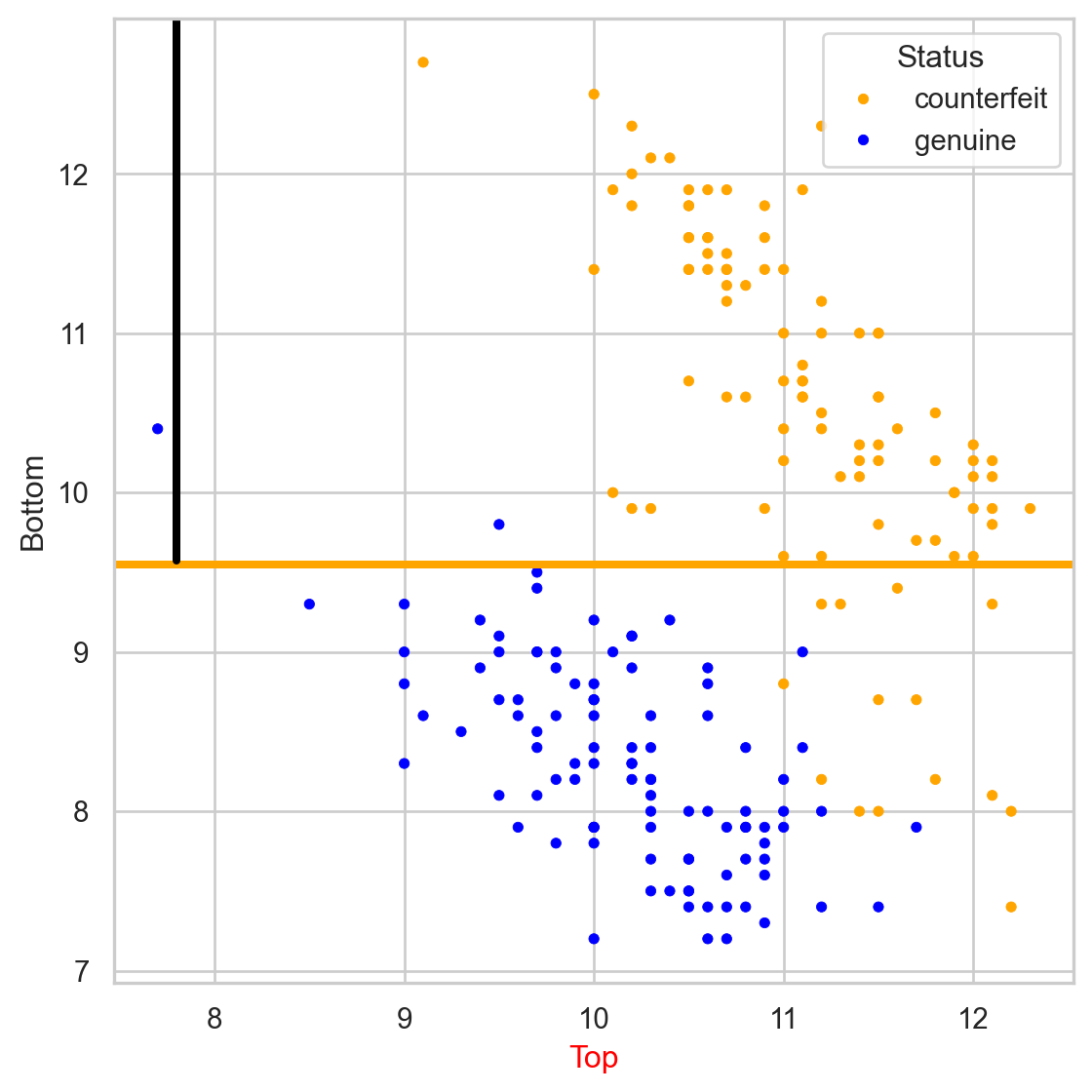

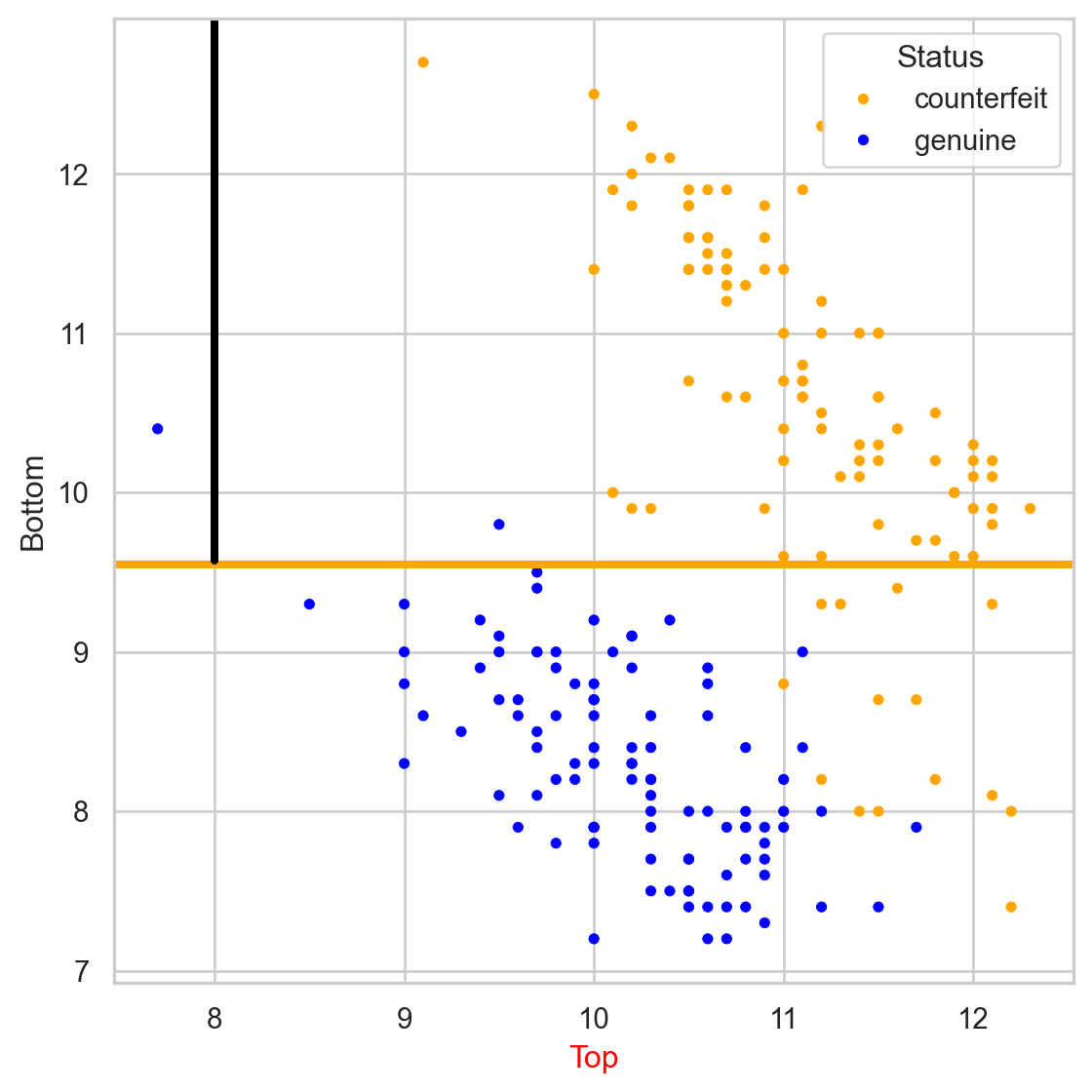

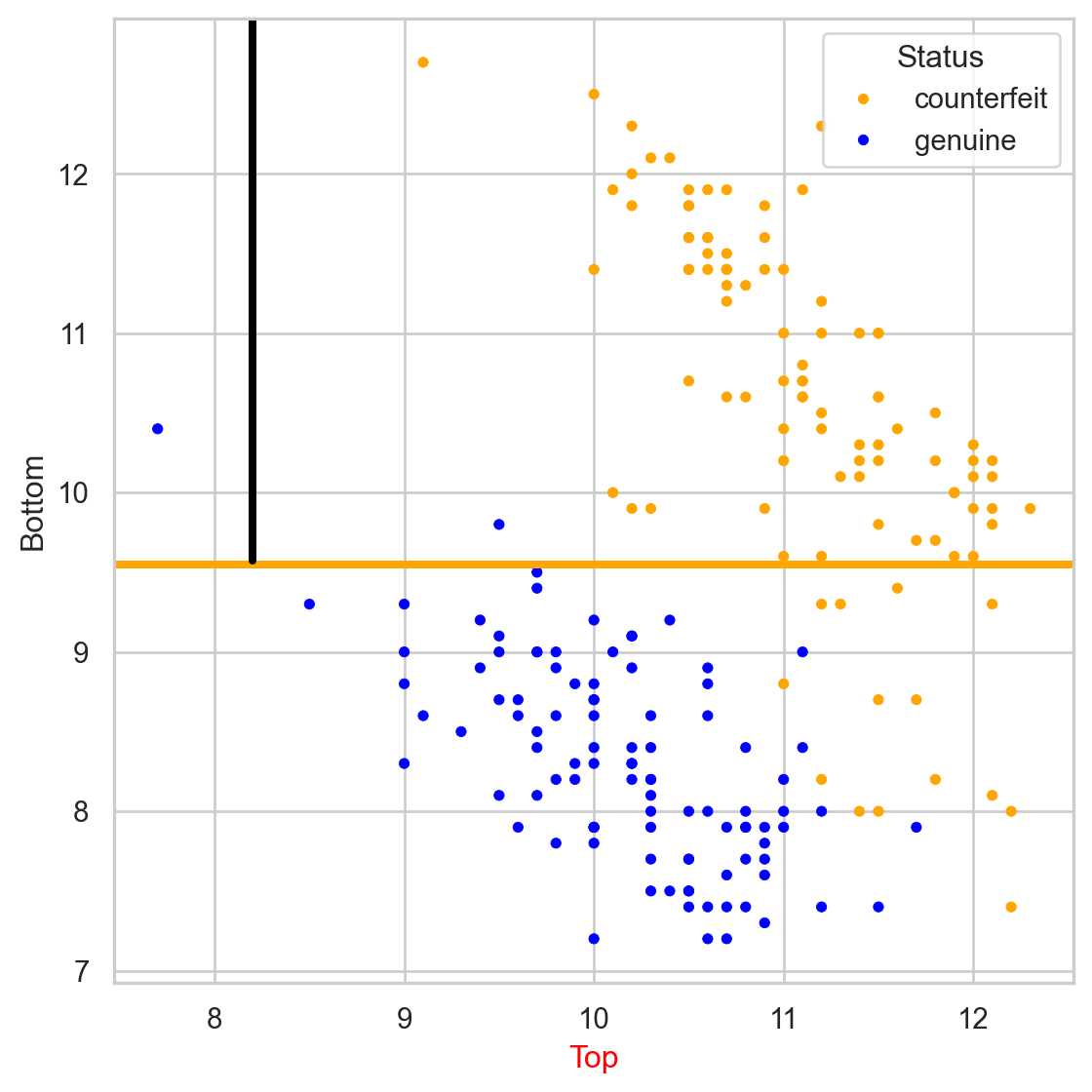

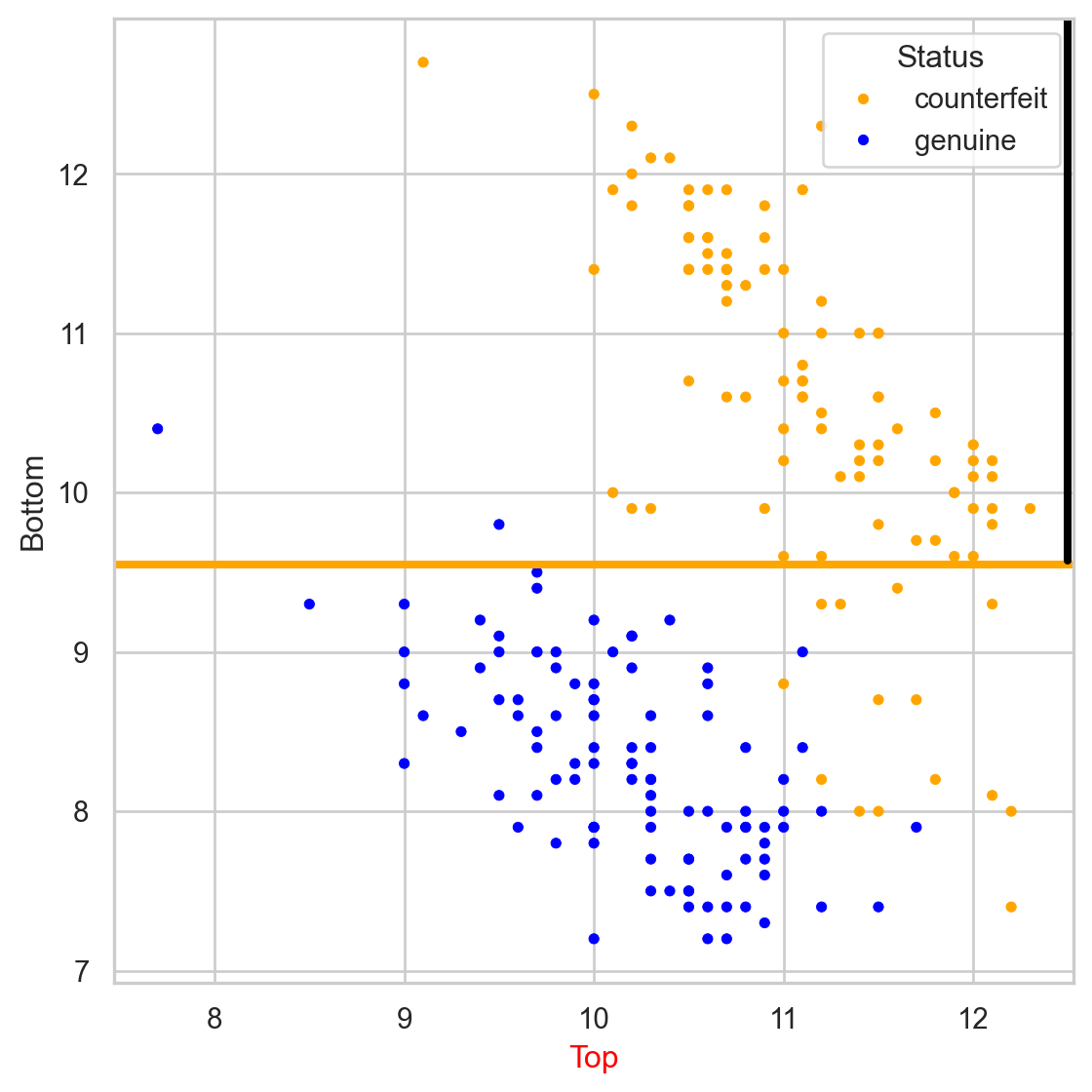

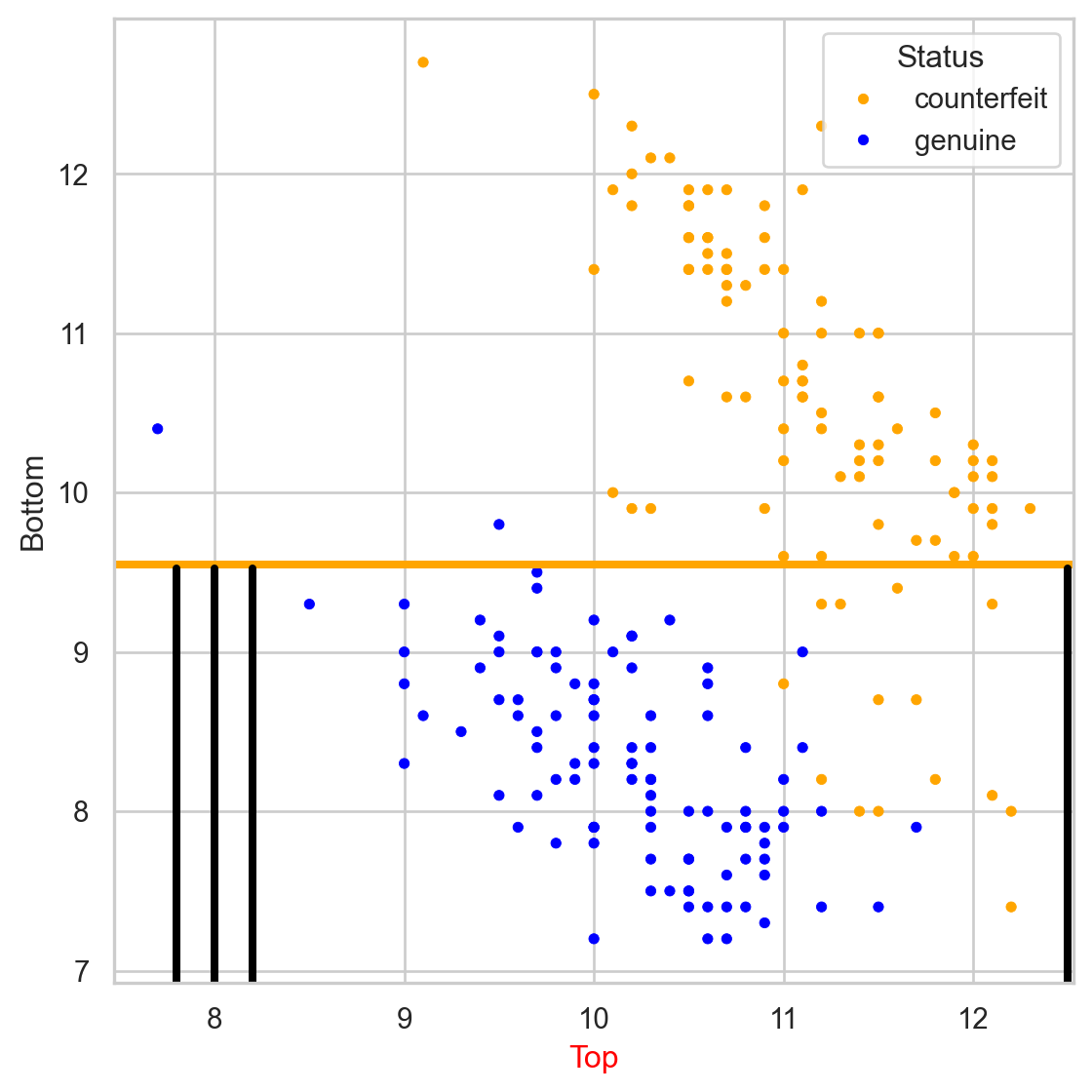

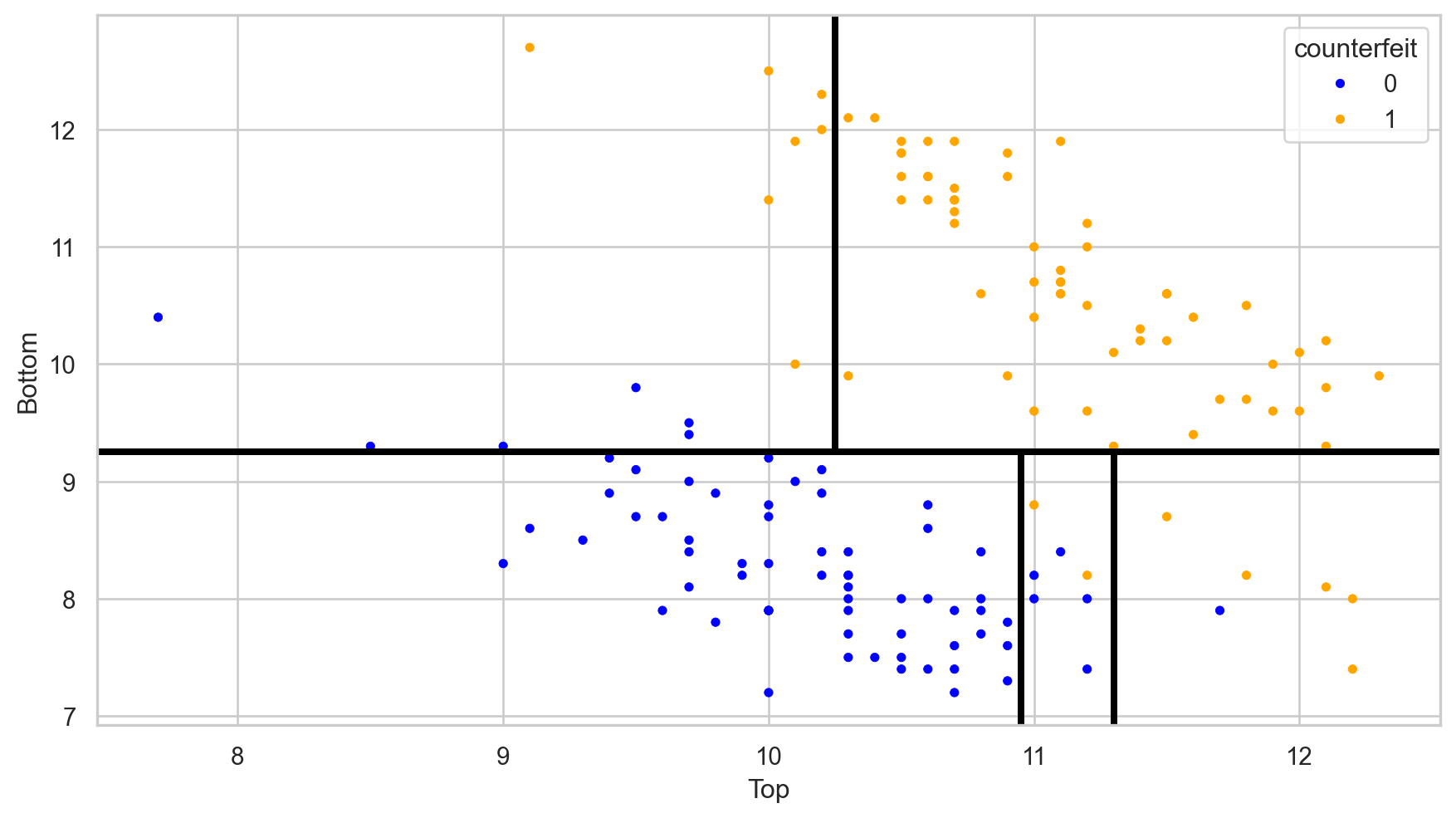

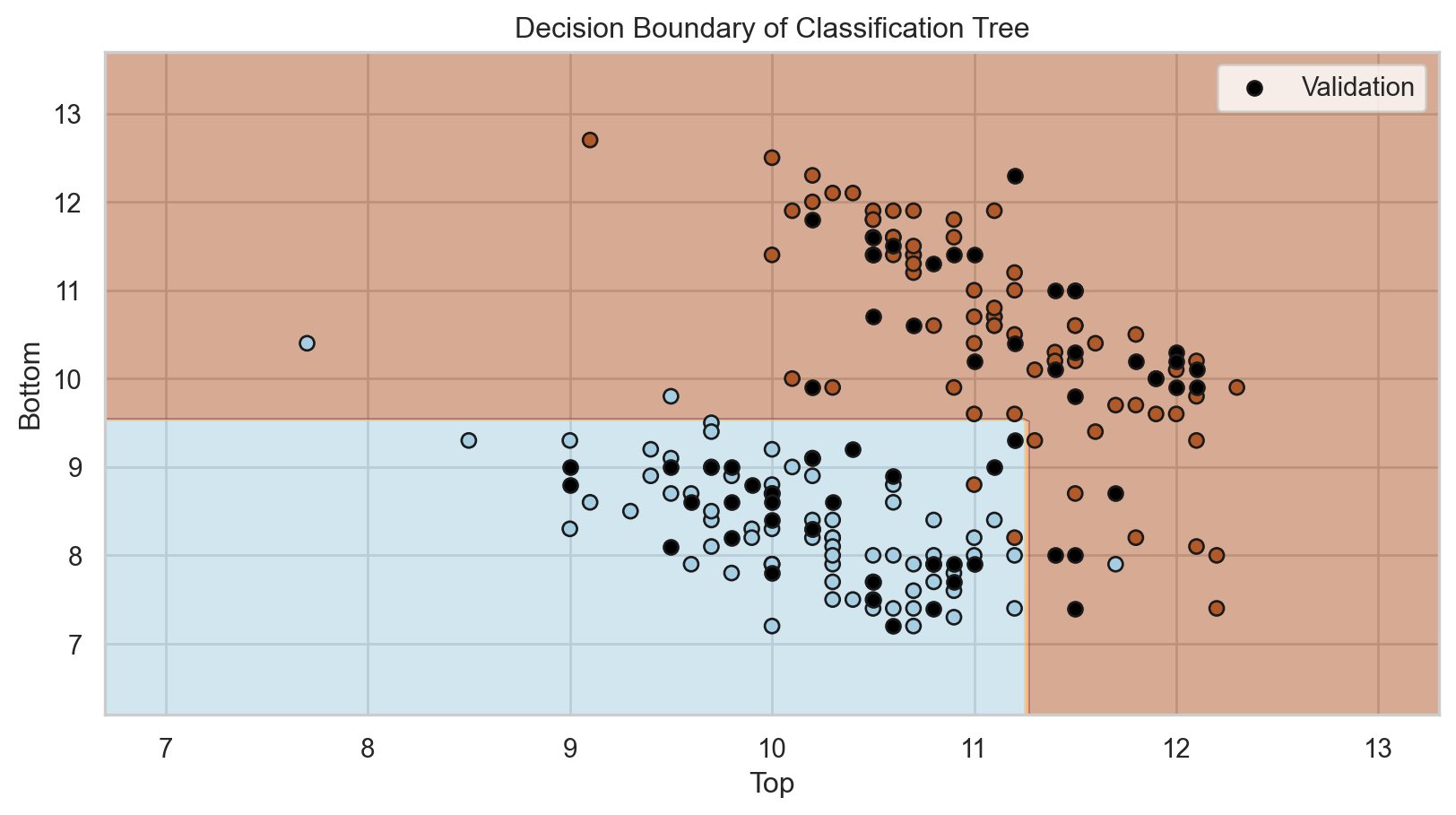

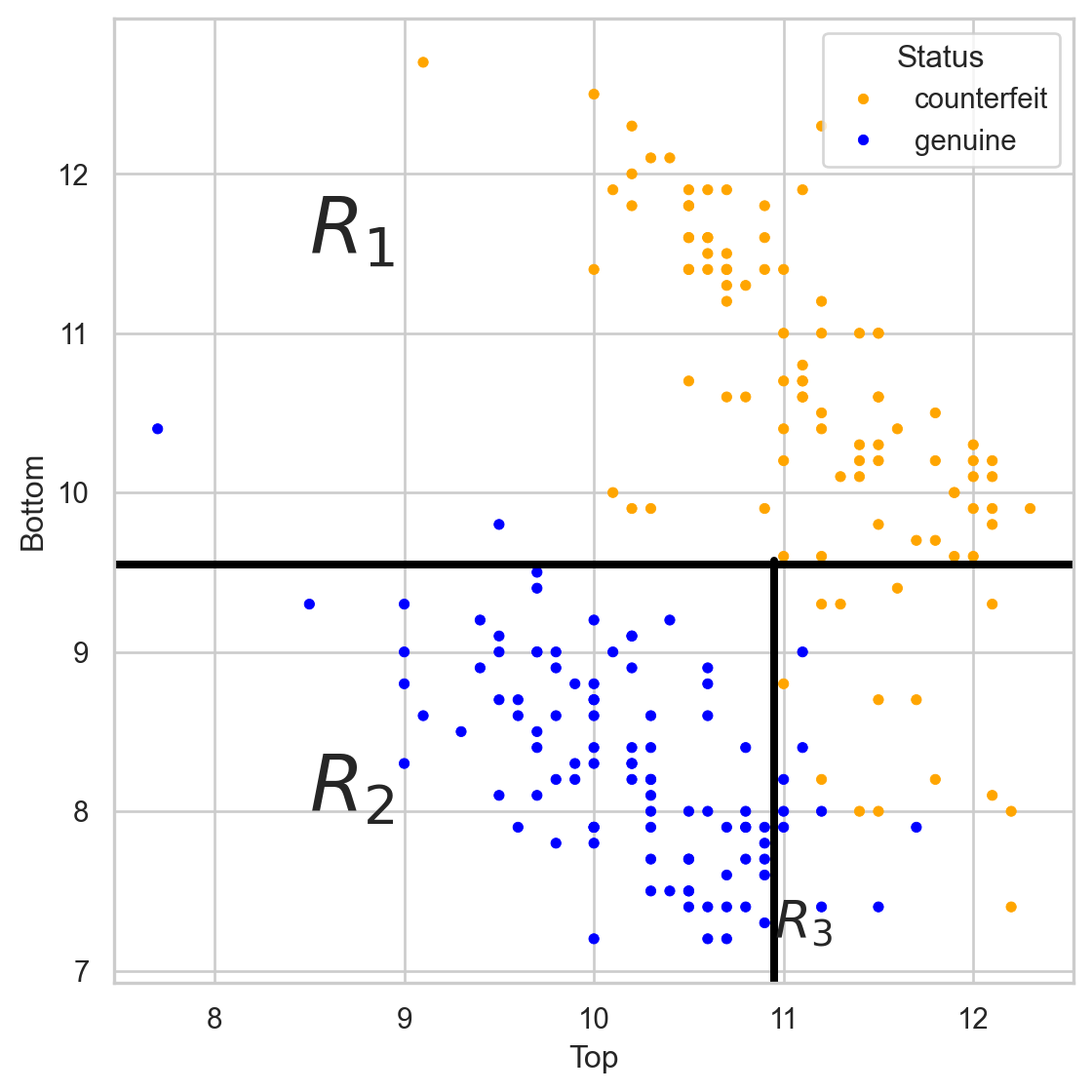

The tree in the predictor space

Estimated conditional probabilities

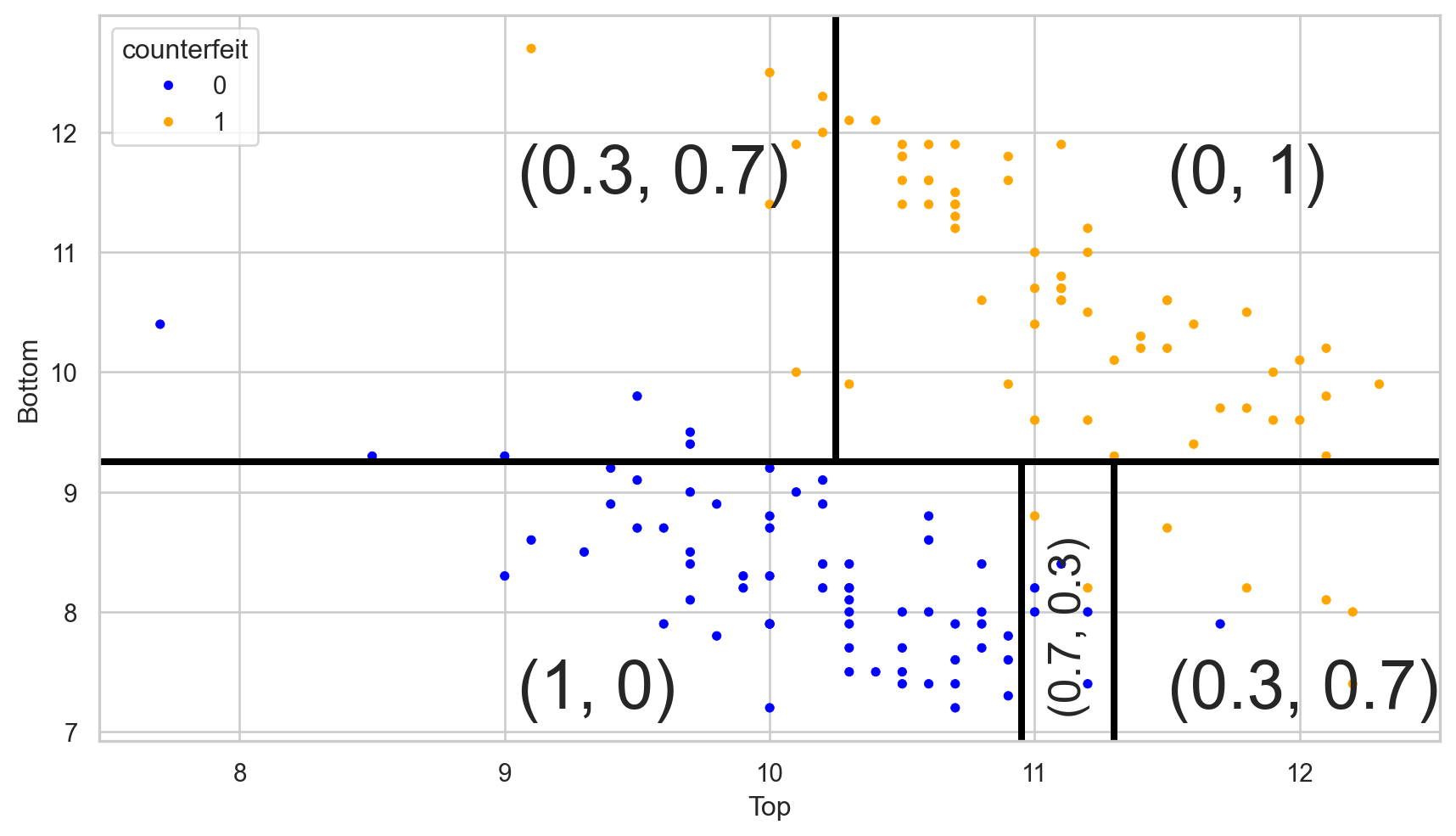

After training a classification tree, we can calculate the estimated conditional probability \(\hat{P}(Y = 1 | \boldsymbol{X} = \boldsymbol{x})\).

To this end, let

- \(\hat{p}_1(\boldsymbol{x}) = \hat{P}(Y = 1 | \boldsymbol{X} = \boldsymbol{x})\)

- \(\hat{p}_0(\boldsymbol{x}) = 1 - \hat{p}_1(\boldsymbol{x})\)

be the estimated probabilities that \(\boldsymbol{x}\) belongs to class 1 or 0.

Essentially, we calculate \(\hat{p}_1(\boldsymbol{x})\) and \(\hat{p}_0(\boldsymbol{x})\) as follows:

- Select the region or terminal node where \(\boldsymbol{x}\) belongs.

- \(\hat{p}_1(\boldsymbol{x})\) is the proportion of observations in that terminal node that belong to class 1. \(\hat{p}_0(\boldsymbol{x})\) is the proportion of observations that belong to class 0.

Visually

Let \((\hat{p}_0(\boldsymbol{x}), \hat{p}_1(\boldsymbol{x}))\) be the estimated probabilities.

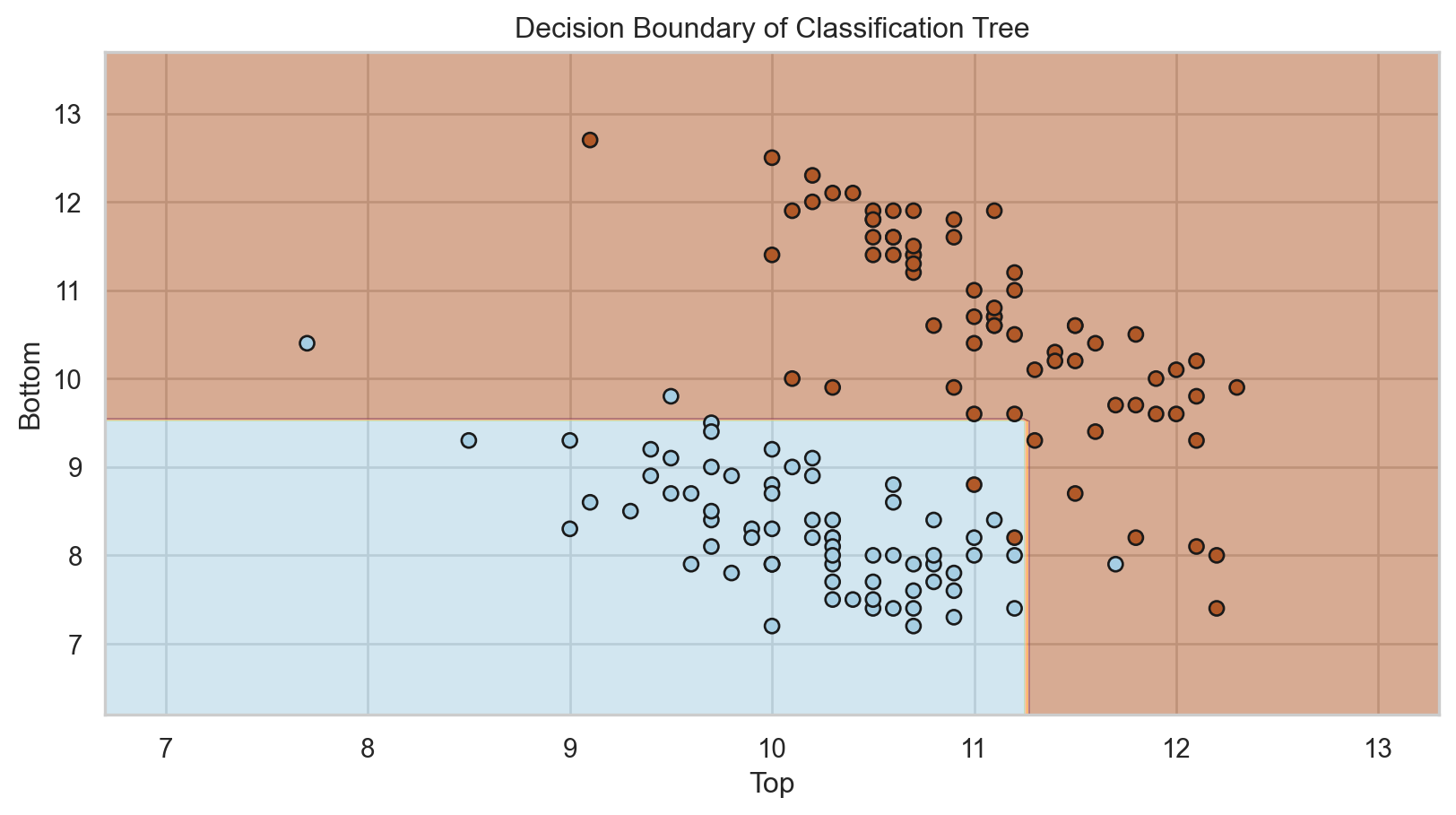

Estimated Bayes Classifier

Once we have estimated the conditional probability using the classification tree, we plug it into the Bayes classifier to have our approximated function:

\[\hat{C}(\boldsymbol{x}) = \begin{cases} 1, & \text{if}\ \hat{p}_1(\boldsymbol{x}) > 0.5 \\ 0, & \text{if}\ \hat{p}_1(\boldsymbol{x}) \leq 0.5 \end{cases},\]

where \(\hat{p}_1(\boldsymbol{x})\) depends on the region or terminal node \(\boldsymbol{x}\) falls in.

Simplified decision boundary

Implementation details

Categorical predictors with unordered levels \(\{A, B, C\}\). We order the levels in a specific way (works for binary and regression problems).

Predictors with missing values. For quantitative predictors, we use multiple imputation. For categorical predictors, we create a new “NA” level.

Tertiary or quartary splits. There is not much improvement.

Diagonal splits (using a linear combination for partitioning). These can lead to improvement, but they impair interpretability.

Classification Metrics

Evaluation

We evaluate a classification tree by classifying observations that were not used for training.

That is, we use the classifier to predict categories in the validation data set using only the predictor values from this set.

In Python, we use the commands:

The predict() function generates actual classes to which each observation was assigned.

The predict_proba() function generates the probabilities used by the algorithm to assign the classes.

array([[1. , 0. ],

[1. , 0. ],

[1. , 0. ],

[1. , 0. ],

[0. , 1. ],

[0. , 1. ],

[0. , 1. ],

[0. , 1. ],

[1. , 0. ],

[1. , 0. ],

[1. , 0. ],

[1. , 0. ],

[0. , 1. ],

[1. , 0. ],

[0.11111111, 0.88888889],

[0.71428571, 0.28571429],

[1. , 0. ],

[0.11111111, 0.88888889],

[0. , 1. ],

[0. , 1. ],

[0.71428571, 0.28571429],

[1. , 0. ],

[1. , 0. ],

[0. , 1. ],

[1. , 0. ],

[0. , 1. ],

[0. , 1. ],

[1. , 0. ],

[0. , 1. ],

[1. , 0. ],

[0.11111111, 0.88888889],

[0. , 1. ],

[1. , 0. ],

[0. , 1. ],

[0. , 1. ],

[0. , 1. ],

[1. , 0. ],

[0. , 1. ],

[1. , 0. ],

[1. , 0. ],

[1. , 0. ],

[0. , 1. ],

[0. , 1. ],

[1. , 0. ],

[0.71428571, 0.28571429],

[1. , 0. ],

[0.11111111, 0.88888889],

[1. , 0. ],

[0. , 1. ],

[1. , 0. ],

[1. , 0. ],

[1. , 0. ],

[0. , 1. ],

[0. , 1. ],

[0. , 1. ],

[0. , 1. ],

[1. , 0. ],

[0. , 1. ],

[0. , 1. ],

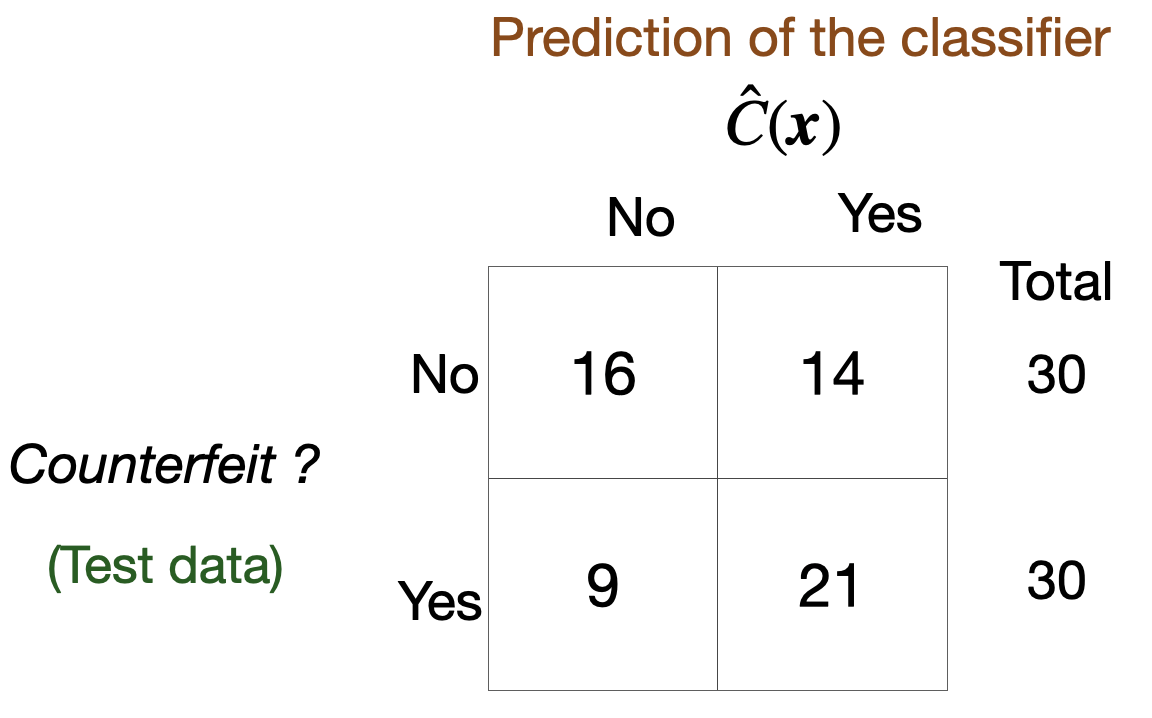

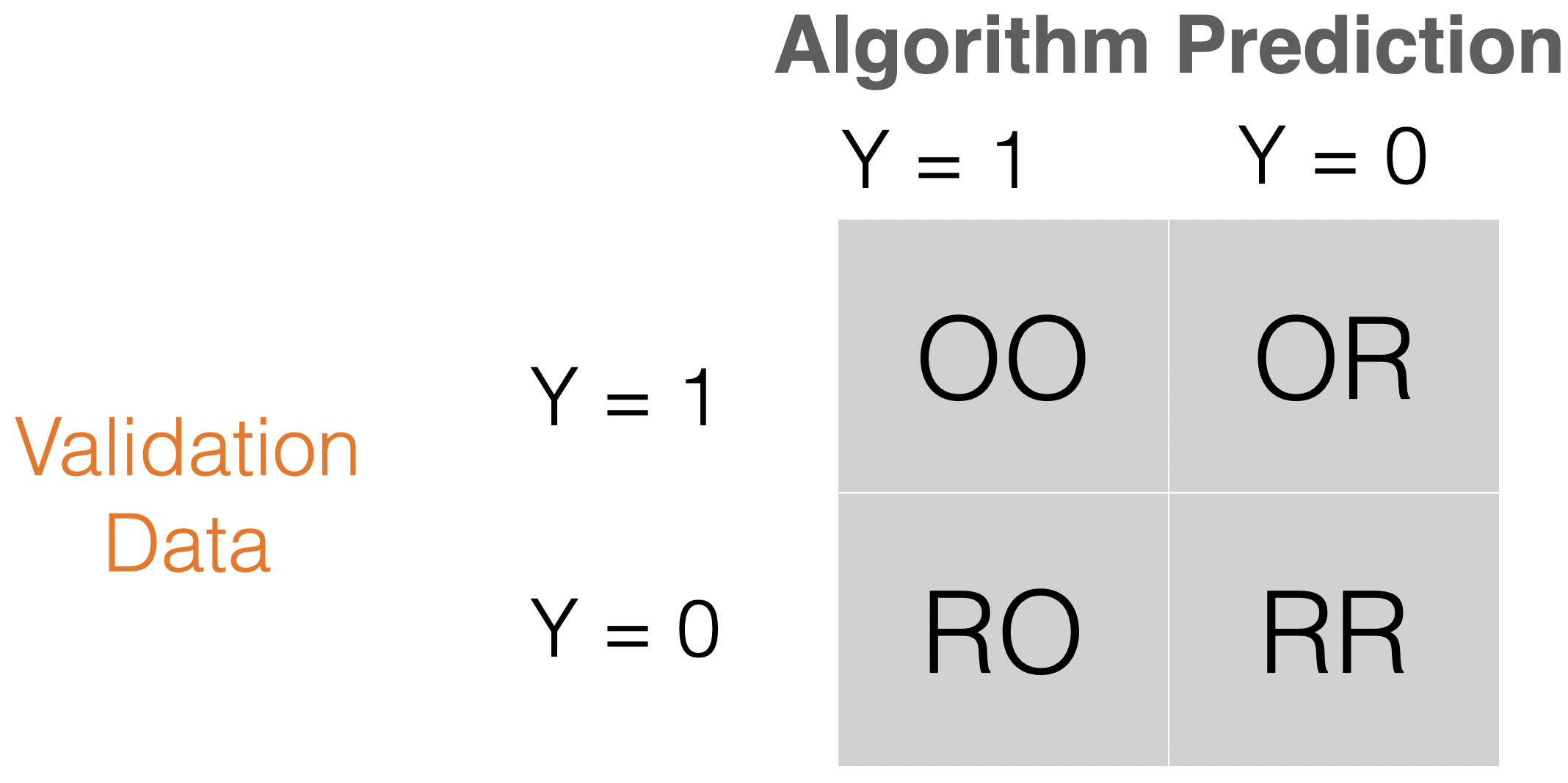

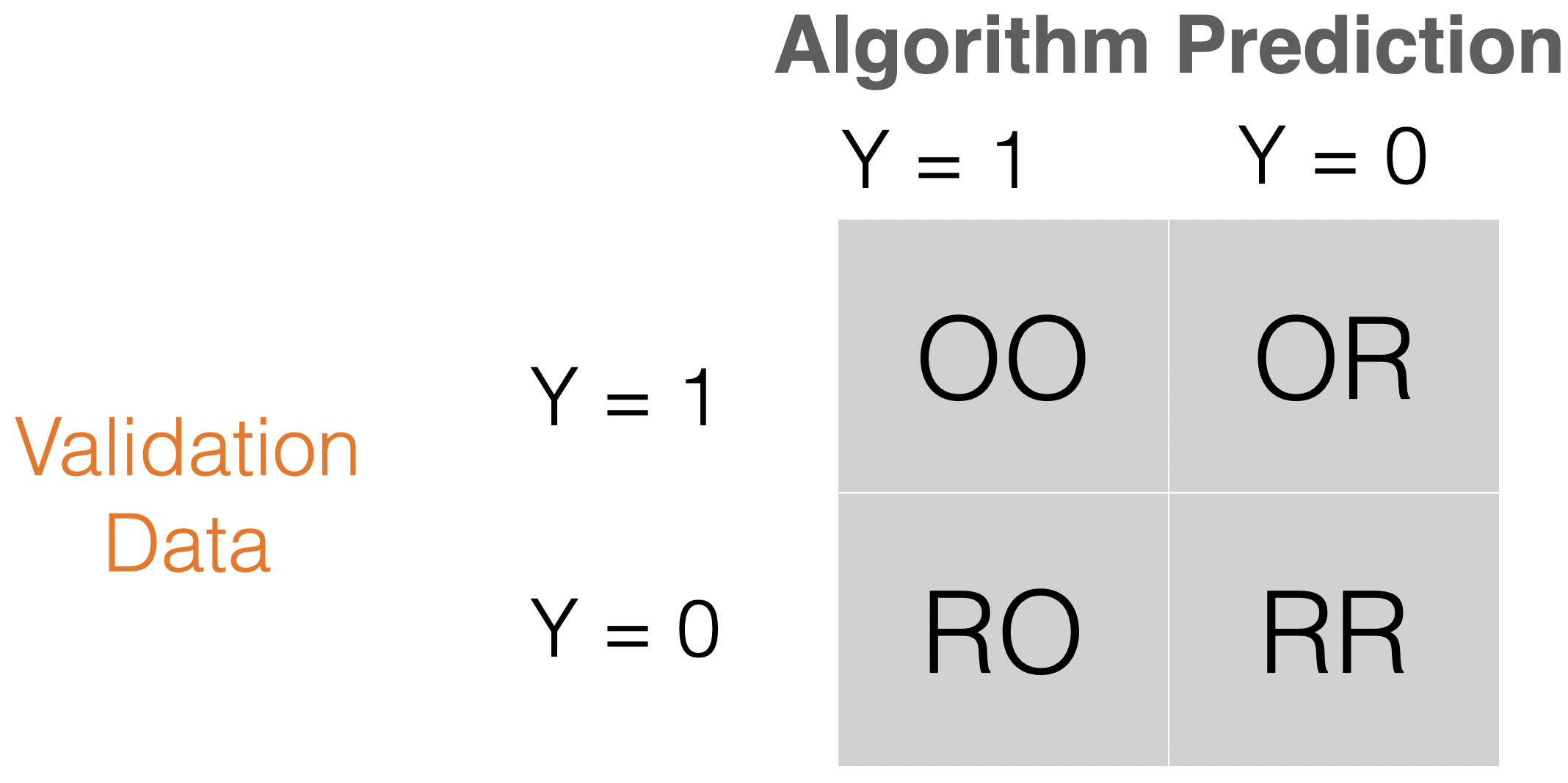

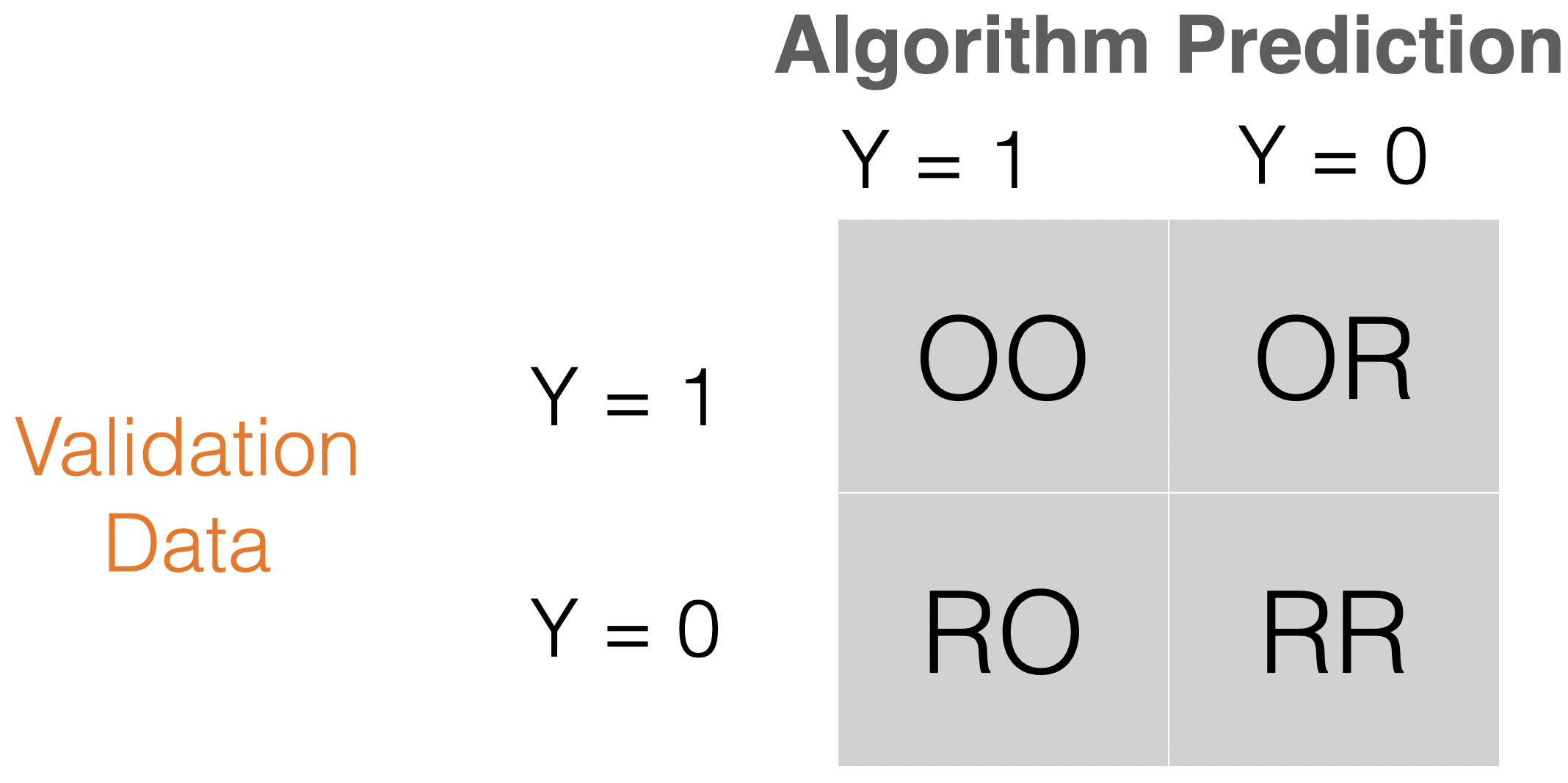

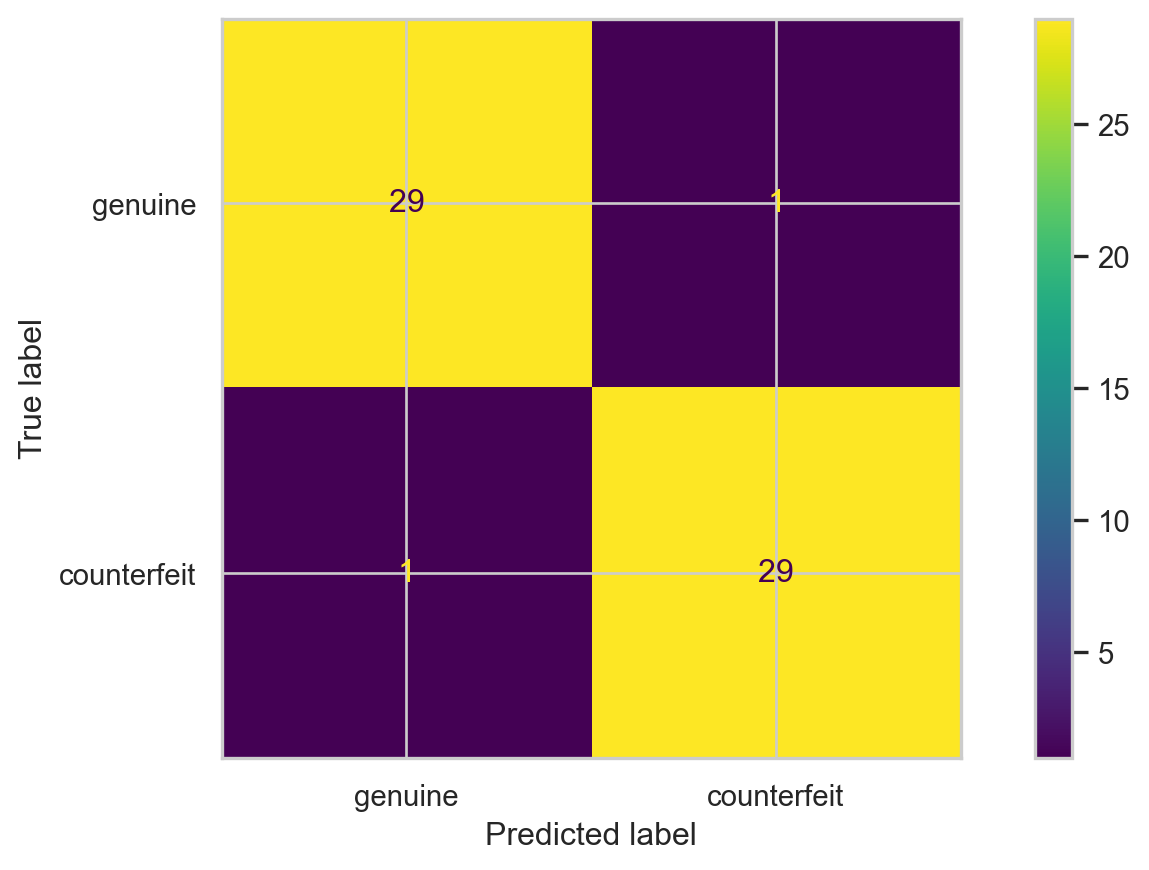

[0. , 1. ]])Confusion matrix

Table to evaluate the performance of a classifier.

Compares actual values with the predicted values of a classifier.

Useful for binary and multiclass classification problems.

In Python

Accuracy

It is a simple metric for summarizing the information in the confusion matrix. It is the proportion of correct classifications for both classes, out of the total classifications performed.

In Python, we calculate accuracy using the scikit-learn accuracy_score() function.

The higher the accuracy, the better the performance of the classification algorithm.

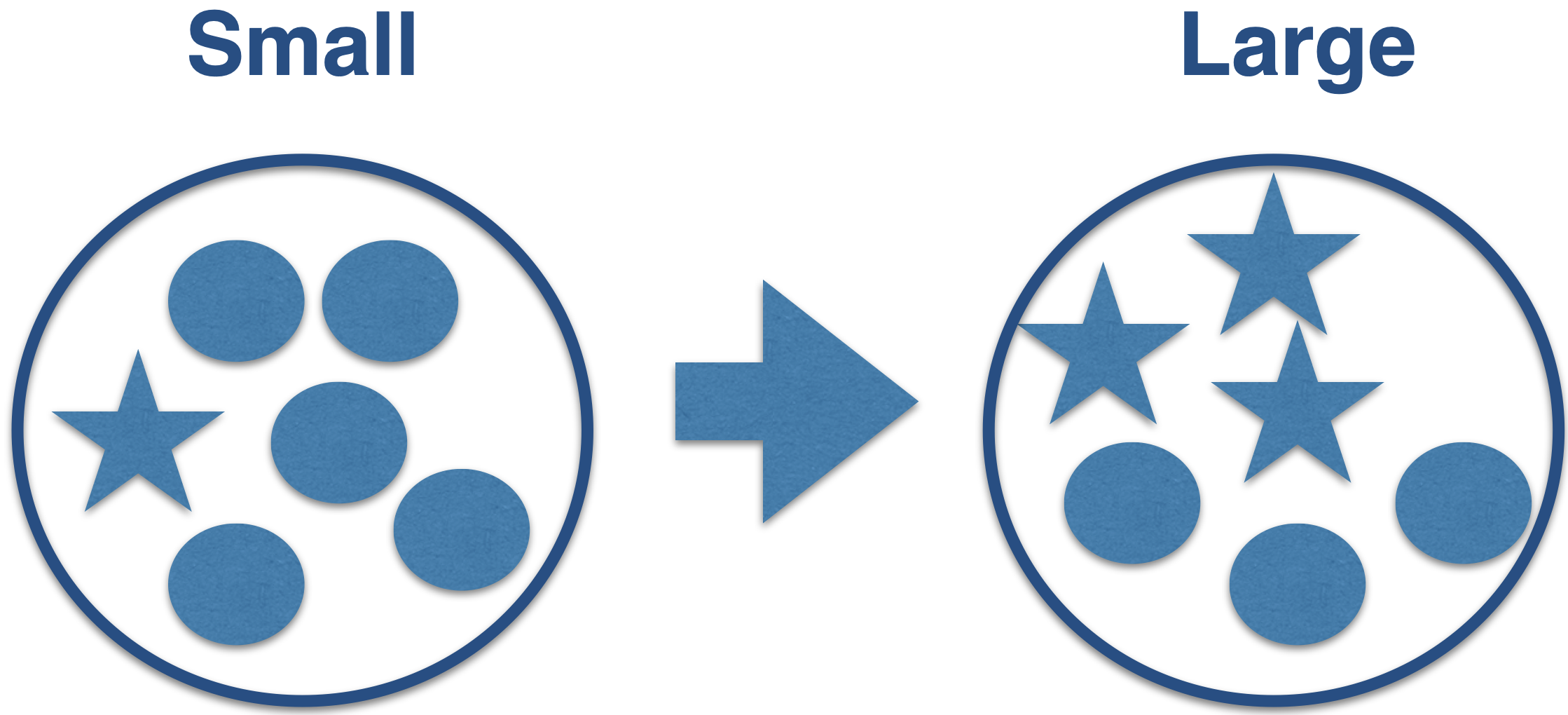

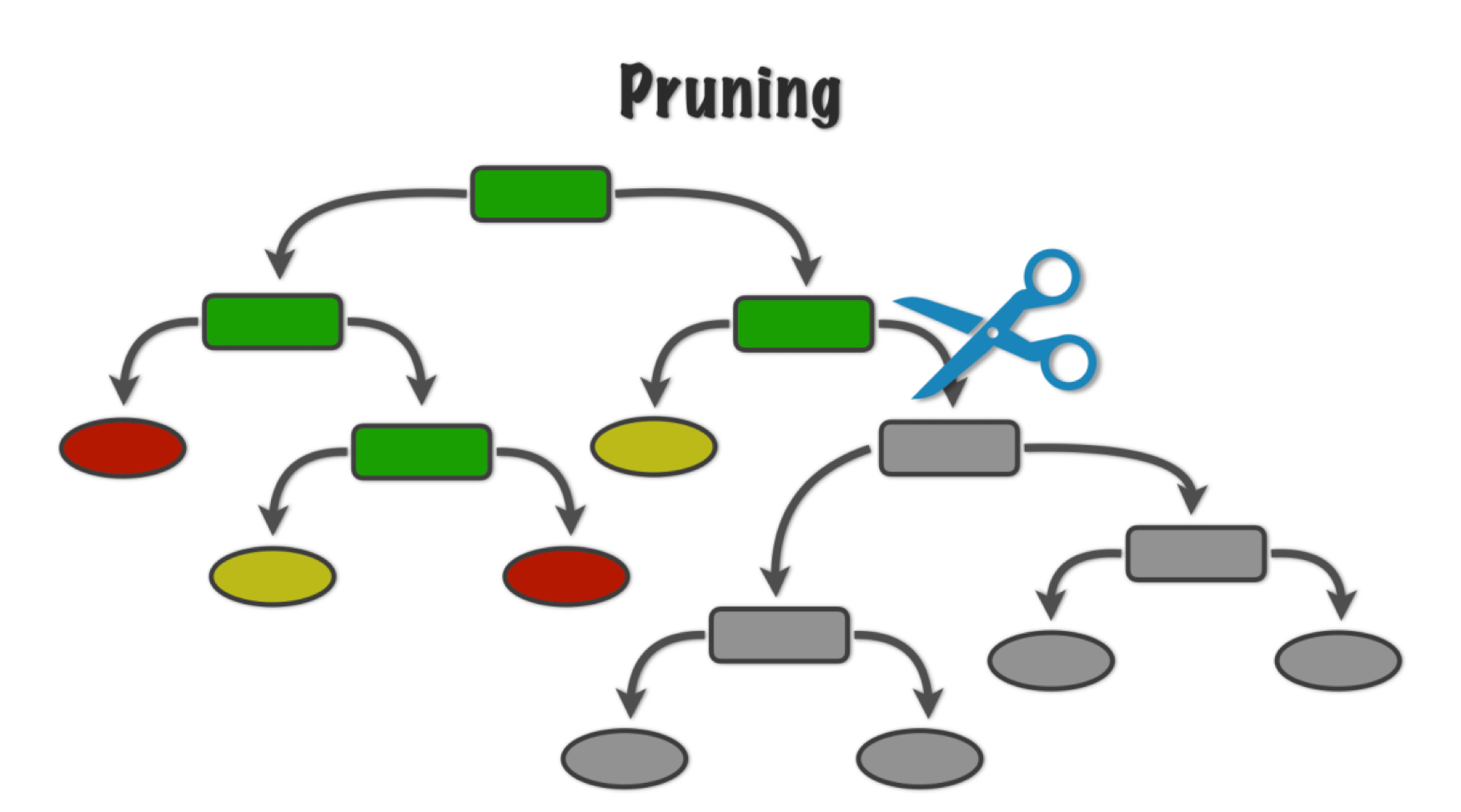

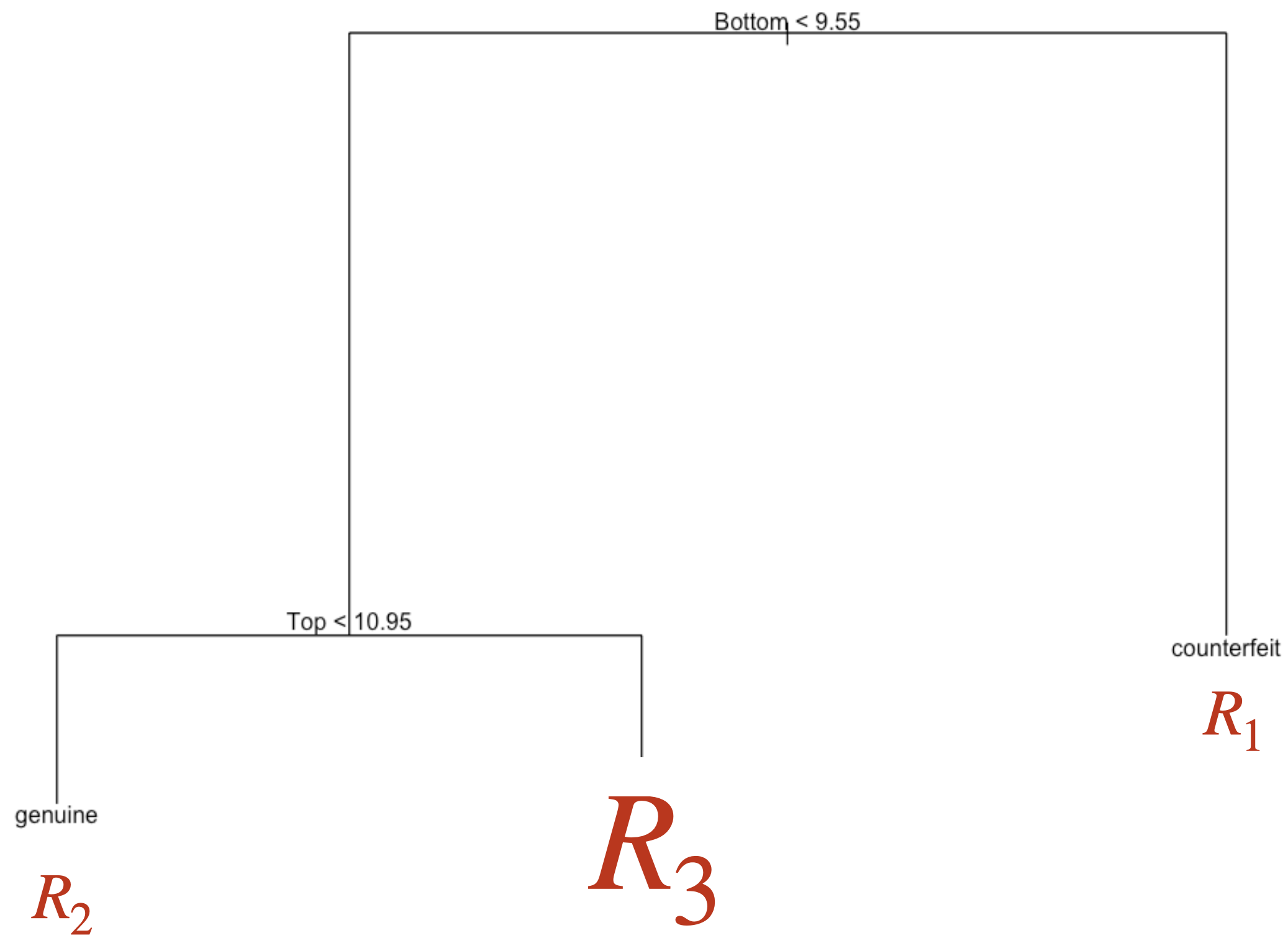

Pruning the tree

In some cases, we can optimize the performance of the tree by pruning it. That is, we collapse two internal (non-terminal) nodes.

To prune a tree, we use an advanced algorithm to measure the contribution of the tree’s branches.

This algorithm minimizes the following function:

\[\text{Missclassification rate of tree} + \alpha (\text{\# terminal nodes}),\]

where \(\alpha\) is a tuning parameter that places greater weight on the number of tree nodes (or size).

Large values of \(\alpha\) result in small trees with few nodes.

Small values of \(\alpha\) result in large trees with many nodes.

Apply penalty for large trees

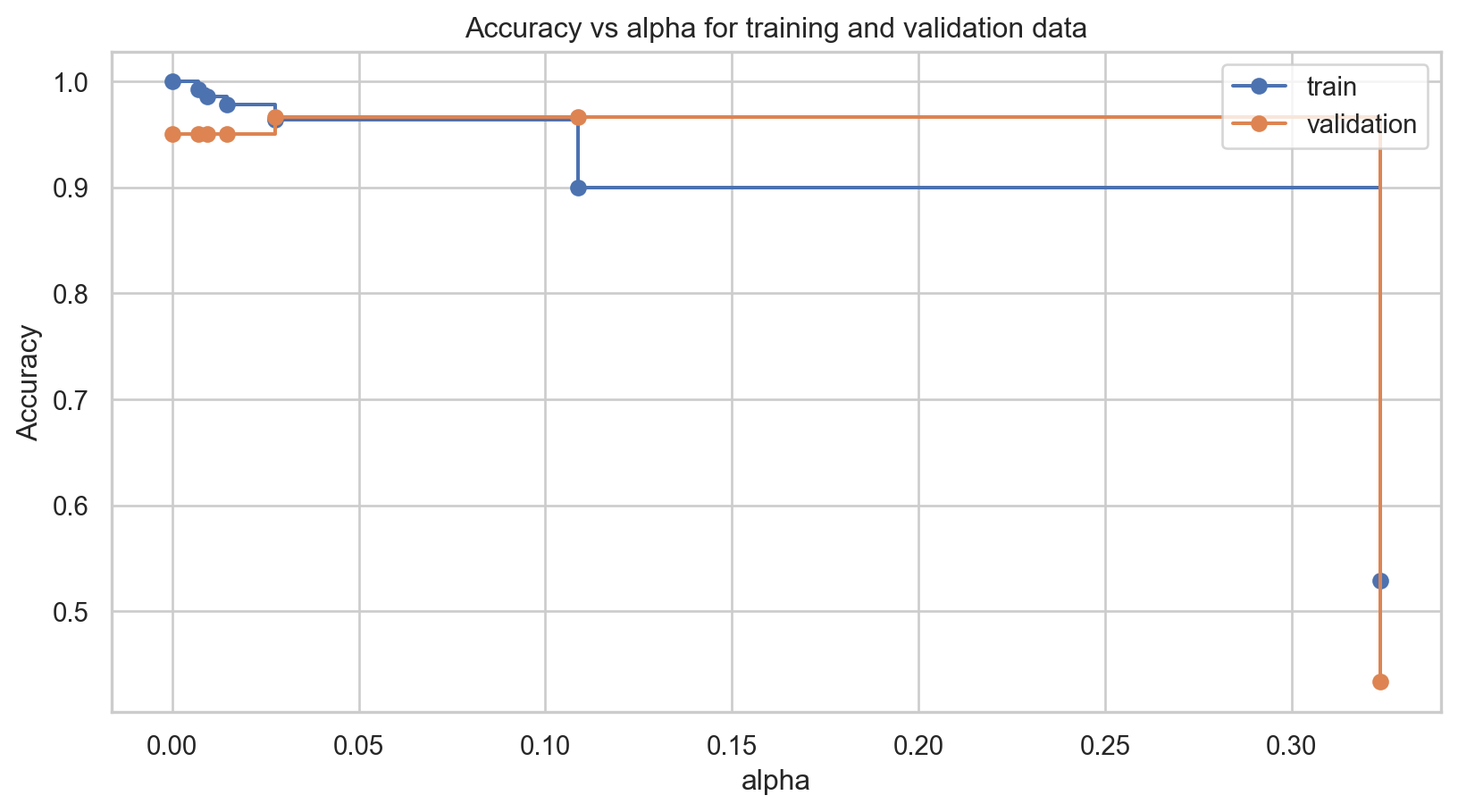

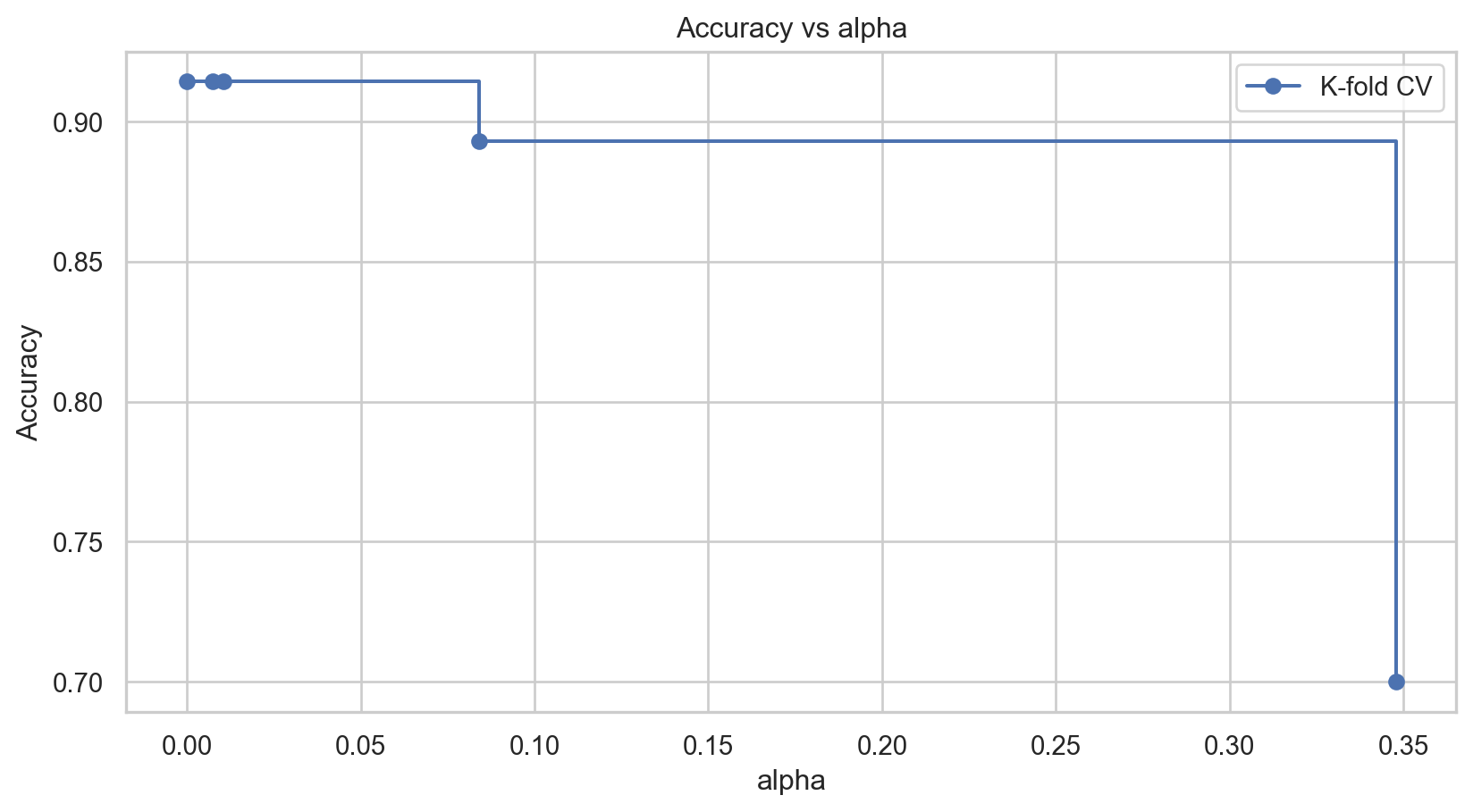

Now, let’s illustrate the pruning technique to find a small decision tree that performs well. To do this, we will train several decision trees using different values of \(\alpha\), which weighs the contribution of the tree branches.

In Python, this is achieved using the cost_complexity_pruning_path() function with the following syntax.

The ccp_alphas object contains the different values of the \(\alpha\) parameter used.

Cross-Validation

To compare the performance of the classification tree for the different values of \(\alpha\), we use 5-fold cross validation as follows.

# 1. Define the grid of parameters

param_grid = {'ccp_alpha': ccp_alphas}

# 2. Set the classification trees using 5-fold CV.

grid_search = GridSearchCV(

DecisionTreeClassifier(min_samples_leaf = 5, random_state = 507134),

param_grid,

cv = 5,

scoring = 'accuracy'

)

# 3. Train the classification algorithms

grid_search.fit(X_train, Y_train)Now, we extract the results from this process.

Best Alpha: 0.0The best_alpha is the optimal value of the cost complexity parameter (\(\alpha\)). The cv_scores shows the estimates of accuracy given by the 5-fold cross-validation.

We visualize the results using the following plot.

Choosing the best tree

We can see that the best \(\alpha\) value for the validation dataset is 0.

To train our new reduced tree, we use the DecisionTreeClassifier() function again with the ccp_alpha parameter set to the best \(\alpha\) value. The object containing this new tree is clf_simple.

Once this is done, we can visualize the small tree using the plot_tree() function.

The accuracy of the pruned tree is:

Class-Specific Metrics

An example

Let’s say we want to create a classifier that tells us whether a mobile phone company’s customer will churn next month.

Customers who churn significantly decrease the company’s revenue. That’s why it’s important to retain these customers.

To retain that customer, the company will send them a text message with an offer for a low-cost mobile plan.

Ideally, our classifier correctly identifies customers who will churn, so they get the offer and, hopefully, stay.

In other words, we want to avoid making wrong decisions about customers who will churn.

Wrong decisions about loyal customers aren’t as relevant.

Because if we classify a loyal customer as one who will churn, the customer will get a good deal. They’ll probably pay less but stay anyway.

Another example

Another example is developing an algorithm (classifier) that can quickly identify patients who may have a rare disease and need a more extensive and expensive medical evaluation.

The classifier must make correct decisions about patients with the rare disease, so they can be evaluated and eventually treated.

A healthy patient who is misclassified with the disease will only incur a few extra dollars to pay for the next test, only to discover that the patient does not have the disease.

Class-specific metrics

To overcome this limitation of accuracy, there are several class-specific metrics. The most popular are:

Sensitivity or recall

Precision

Type I error

These metrics are calculated from the confusion matrix.

Sensitivity or recall = OO/(OO + OR) “How many records of the target class did we predict correctly?”

Precision = OO/(OO + RO) How many of the records we predicted as target class were classified correctly?

In Python, we compute sensitivity and precision using the following commands:

Type I error = RO/(RO + RR) “How many of the reference records did we incorrectly predict as targets?”

Unfortunately, there is no simple command to calculate the type-I error in sklearn. To overcome this issue, we must calculate it manually.

Discussion

There is generally a trade-off between sensitivity and Type I error.

Intuitively, increasing the sensitivity of a classifier is likely to increase Type I error, because more observations are predicted as positive.

Possible trade-offs between sensitivity and Type I error may be appropriate when there are different penalties or costs associated with each type of error.

Disadvantages of decision trees

Decision trees have high variance. A small change in the training data can result in a very different tree.

It has trouble identifying simple data structures.

Return to main page

Tecnologico de Monterrey

Comments on accuracy

Accuracy is easy to calculate and interpret.

It works well when the data set has a balanced class distribution (i.e., cases 1 and 0 are approximately equal).

However, there are situations in which identifying the target class is more important than the reference class.

For example, it is not ideal for unbalanced data sets. When one class is much more frequent than the other, accuracy can be misleading.